How to restore websites from the Web Archive - archive.org. Part 1

Web Archive Interface: Instructions for the Summary, Explore, and Site map tools.

In this article we will talk about the Wayback Machine itself and how it works.

For reference: Archive.org (Wayback Machine - Internet Archive) was created by Brewster Cale in 1996 about at the same time when he founded Alexa Internet, a company that collects statistics on website traffic. In October of that year, company started archiving and storing copies of web pages. But the current form called WAYBACKMACHINE, that we can use now, started only in 2001, although the data has been saved since 1996. Web archive advantage for any website is that it saves not only the html-code of the pages, but also other file types: doc, zip, avi, jpg, pdf, css. A complex of html-codes of all page elements allows you to restore the site in its original form (on a specific indexing date when web archive spider visited the site’s pages).

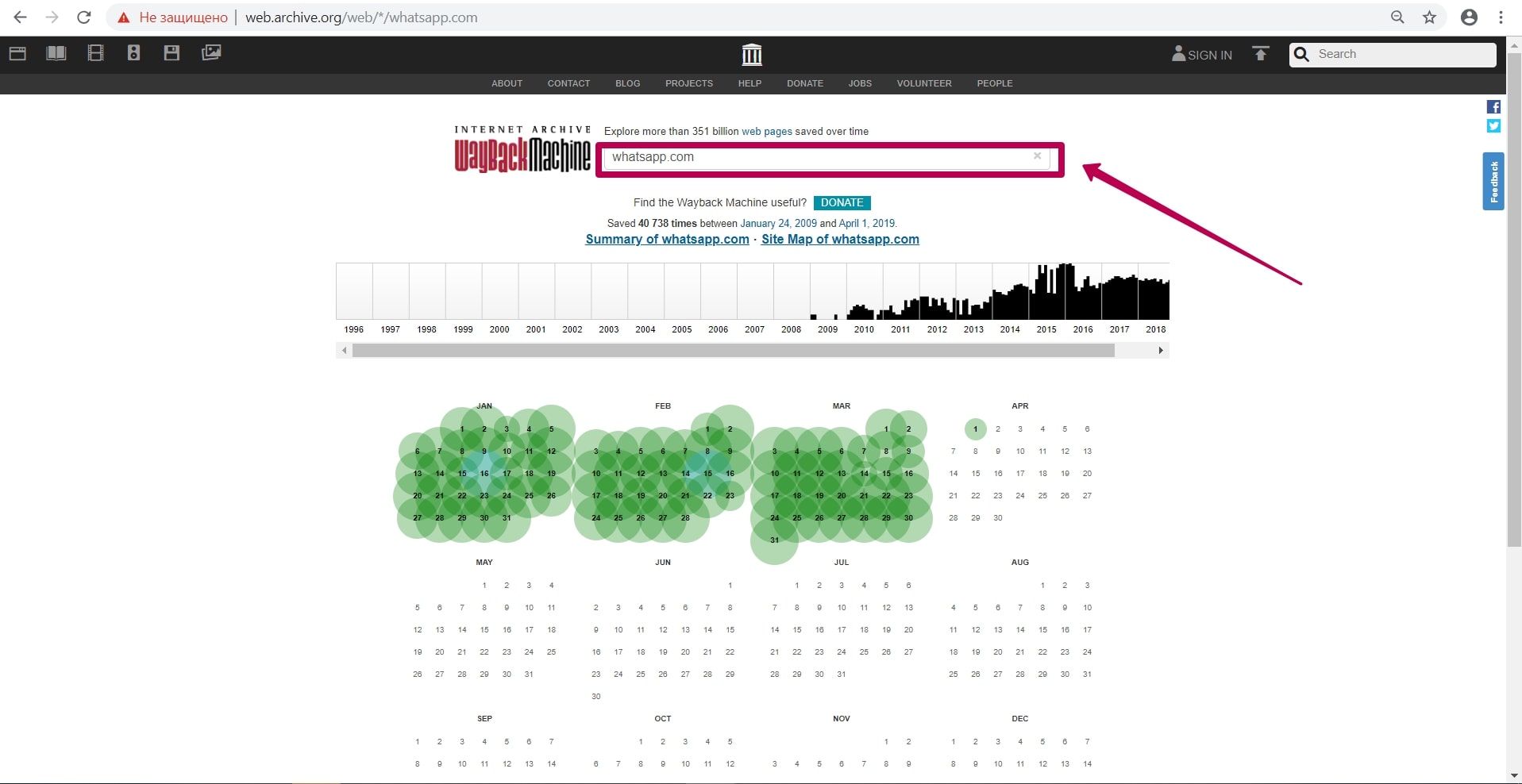

So, archive is located at http://web.archive.org/. Let’s touch on web archive possibilities on the example of a well-known website as WhatsApp.

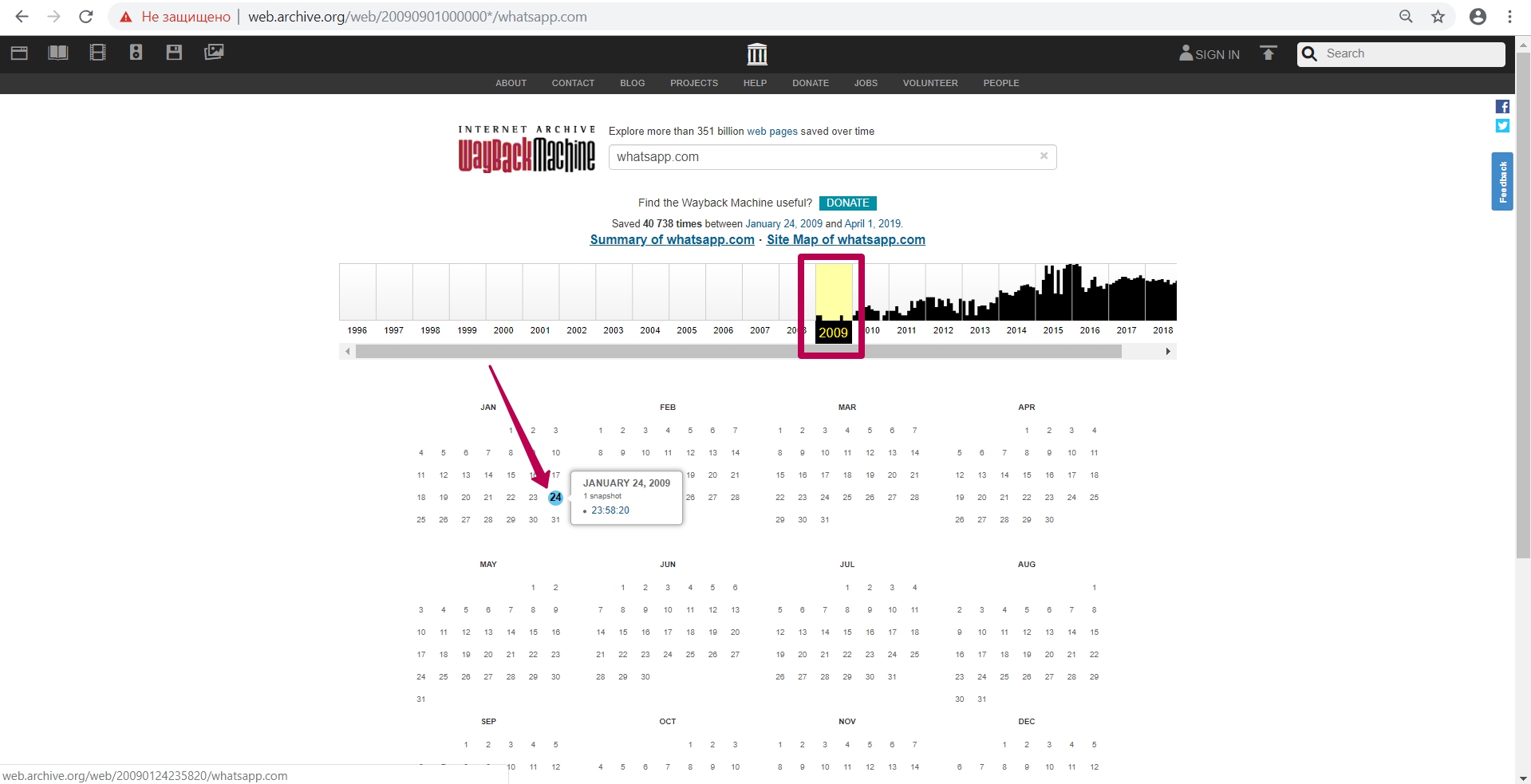

Enter website domain on the main page in the search field. In our case it will be whatsapp.com.

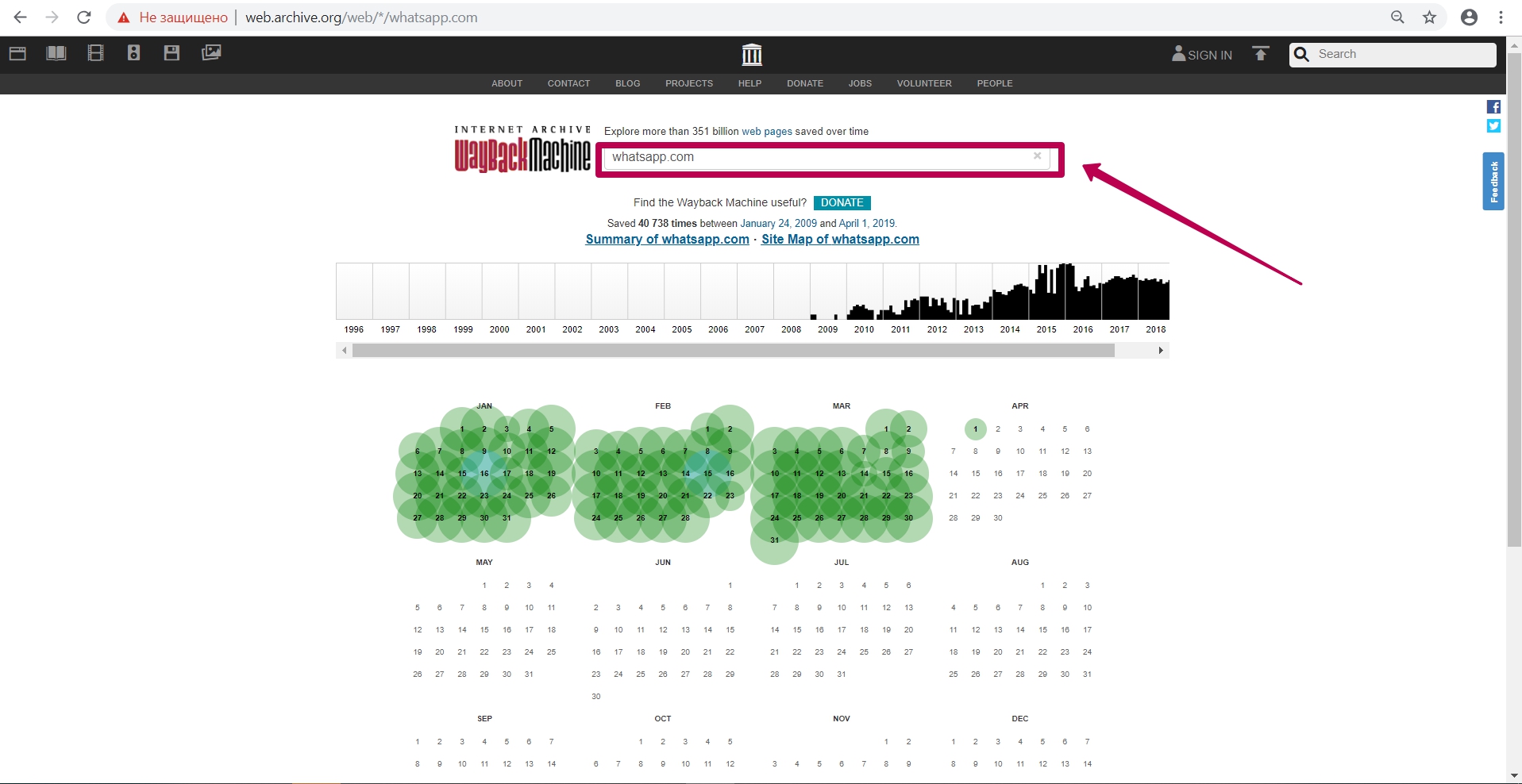

After entering website link, we see the calendar of saving html code of the page. On this calendar, we see notes in different colors on the save dates:

Blues means server valid code 200 response (no errors from the server);

Red (may be yellow or orange, depending on the browser and PC operating system) means 404 or 403 error, something that is not interesting when restoring;

Green stands for pages redirect (301 and 302).

Calendar colors do not give a 100% guarantee of compliance: on the blue date there can a redirect as well (not at the header level, but, for example, in the html code of the page itself: in the refresh meta tags (screen refresh tags) or in JavaScript).

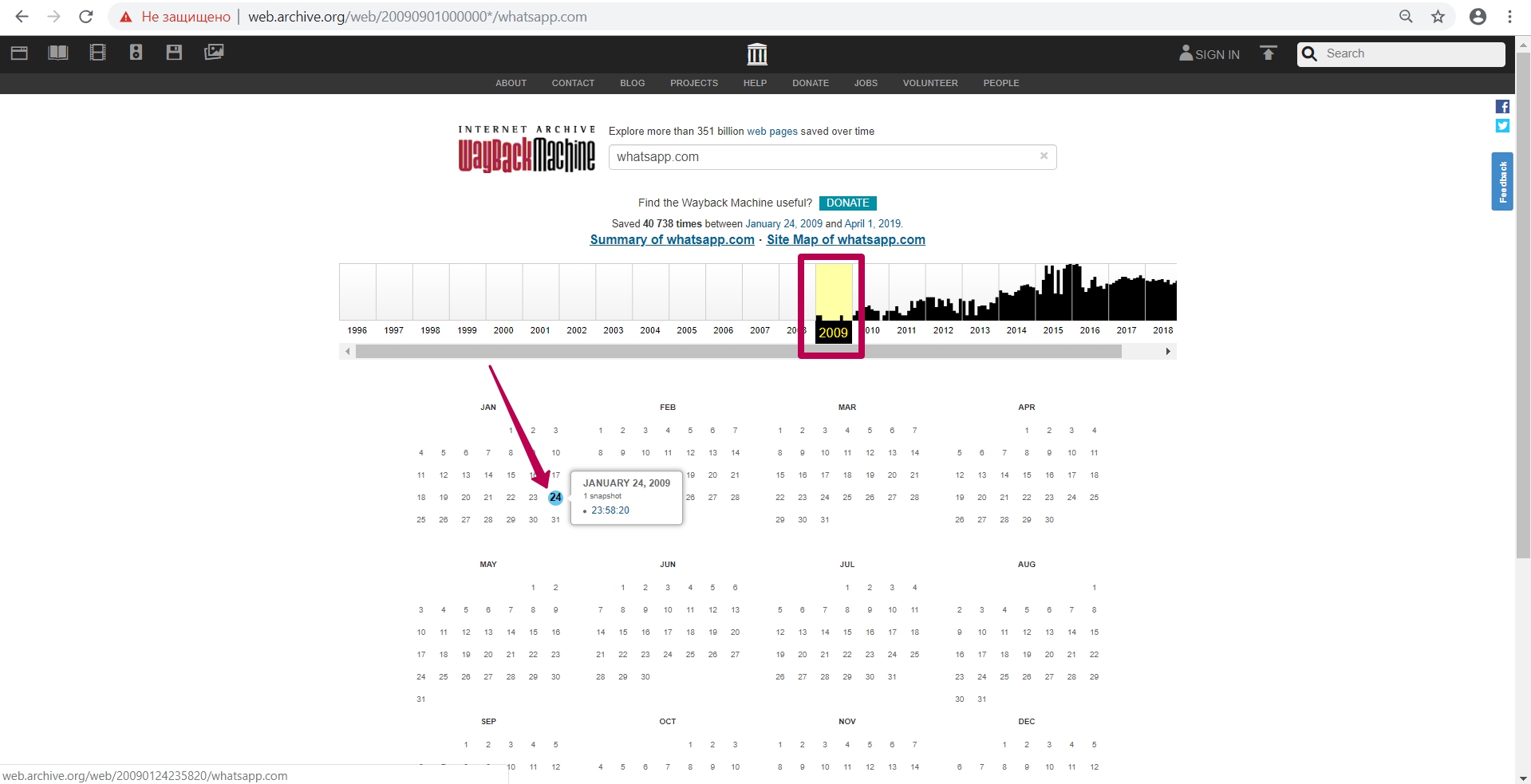

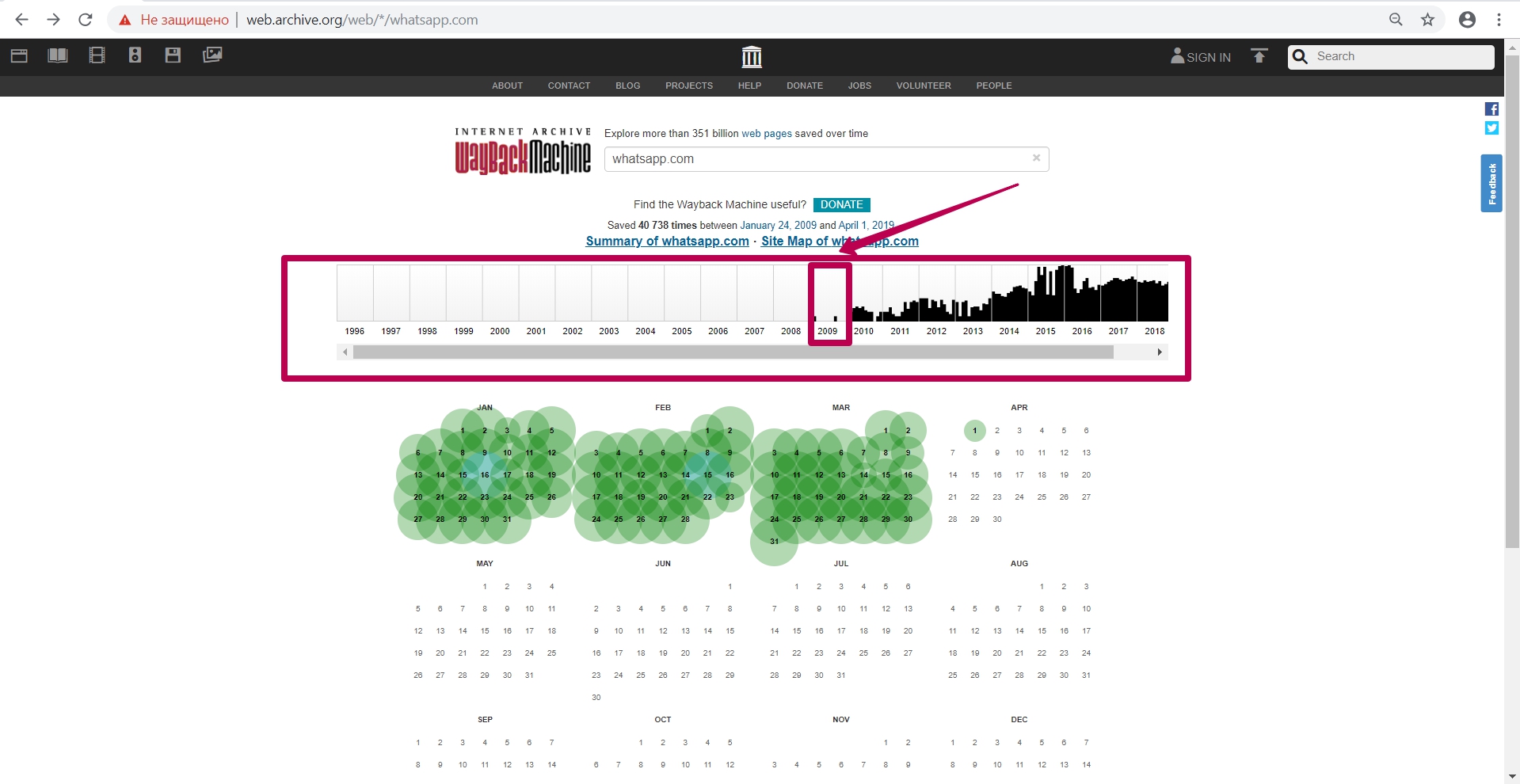

Let’s go to 2009, when this website indexing (saving) started.

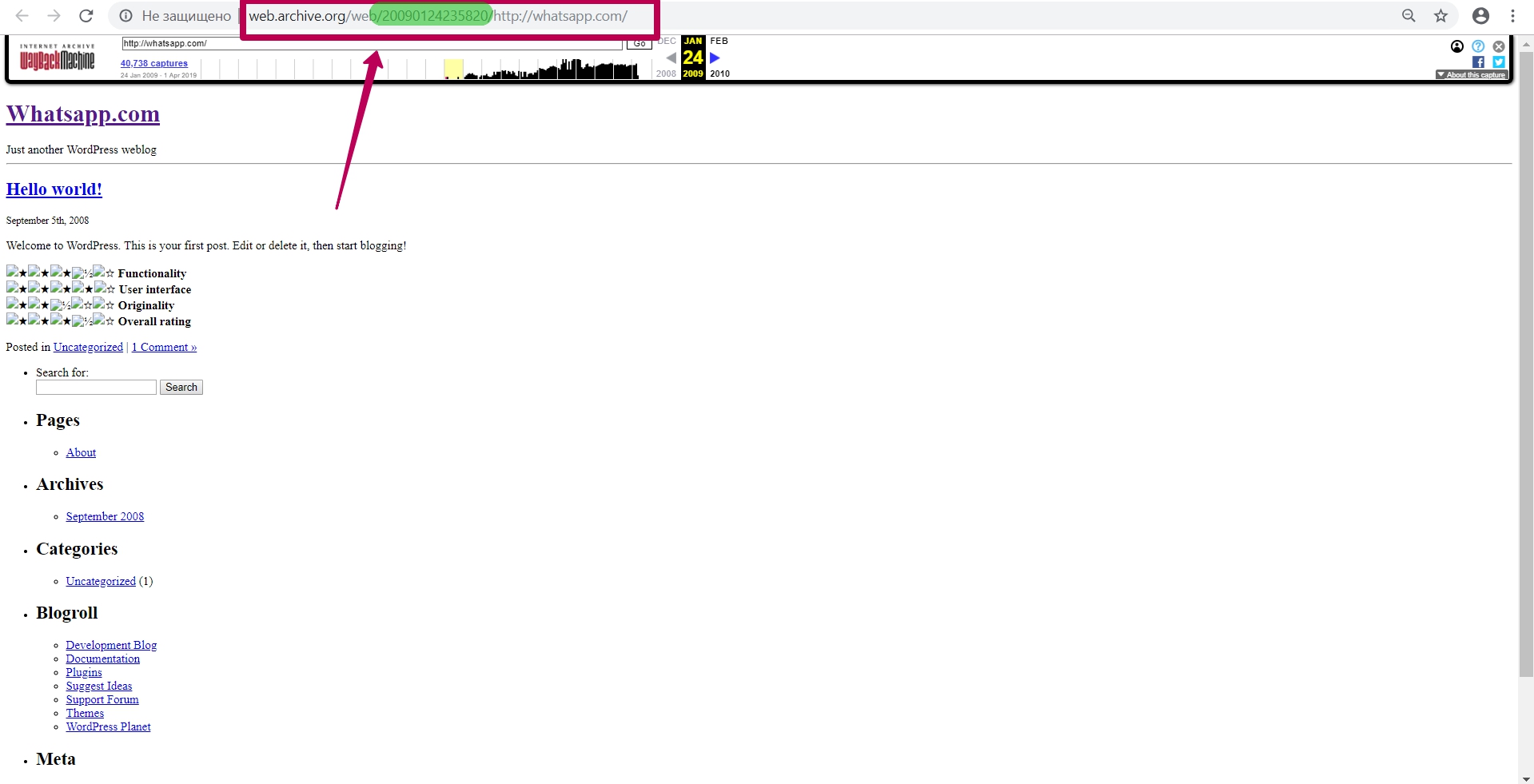

We see the version dated January 24, and then we open it in a new tab (in case of errors during operation, it is better to open the web archive tool in incognito mode or in another browser).

So, we see the 2009 version of the WhatsApp page. In the page url we see the numbers called timestamp, i.e. year, month, day, hour, minute, second, when this url was saved. Timestamp format (YYYYMMDDhhmmss).

Timestamp is not the time website copy saving, not the time of page saving, but this is the time of specific file saving. This is important to know when restoring content from a web archive. All website elements as pictures, styles, scripts, html code and so on have their own timestamp, that is, archiving date.

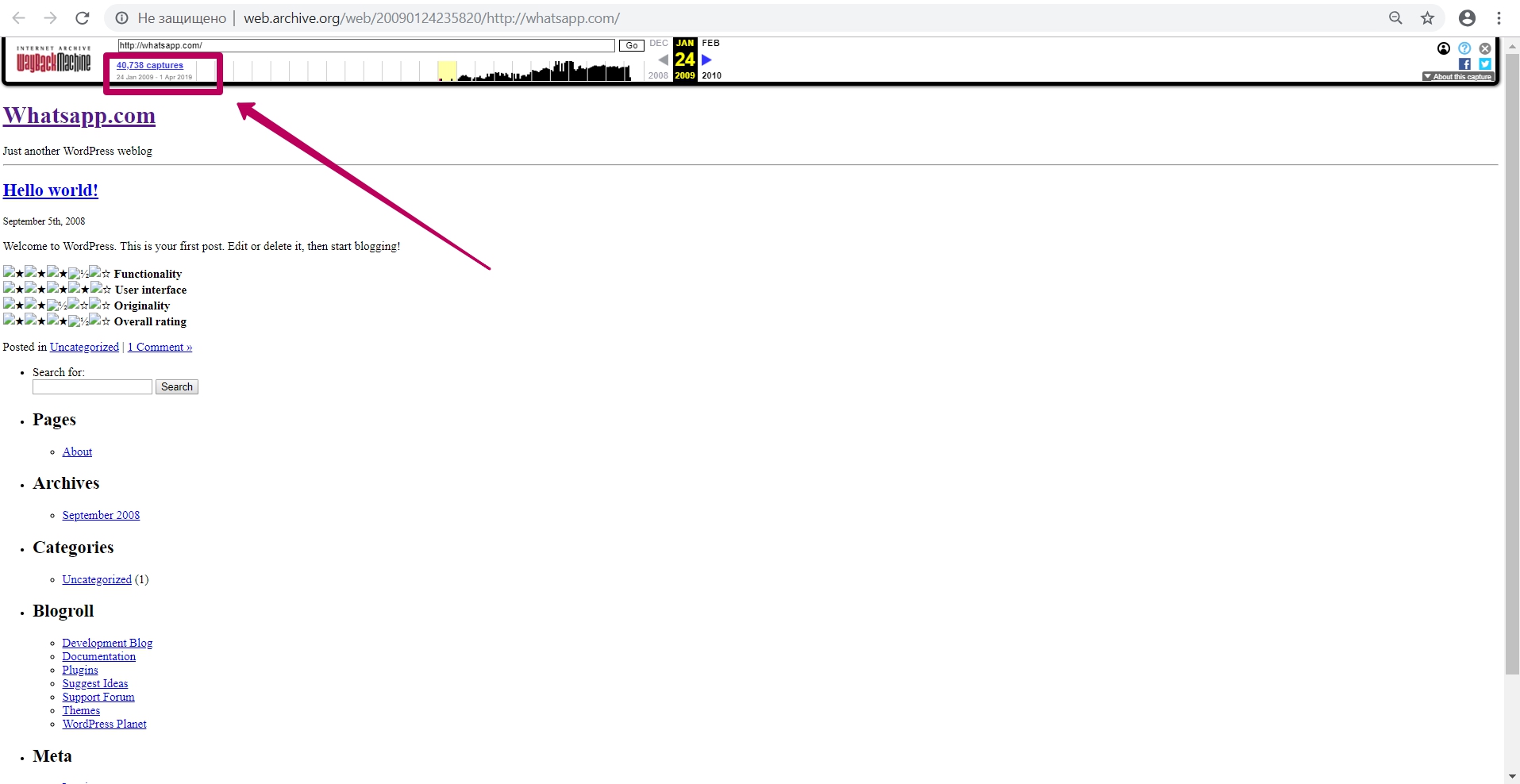

In order to return from website page to the calendar, click on the link with captures number (page captures).

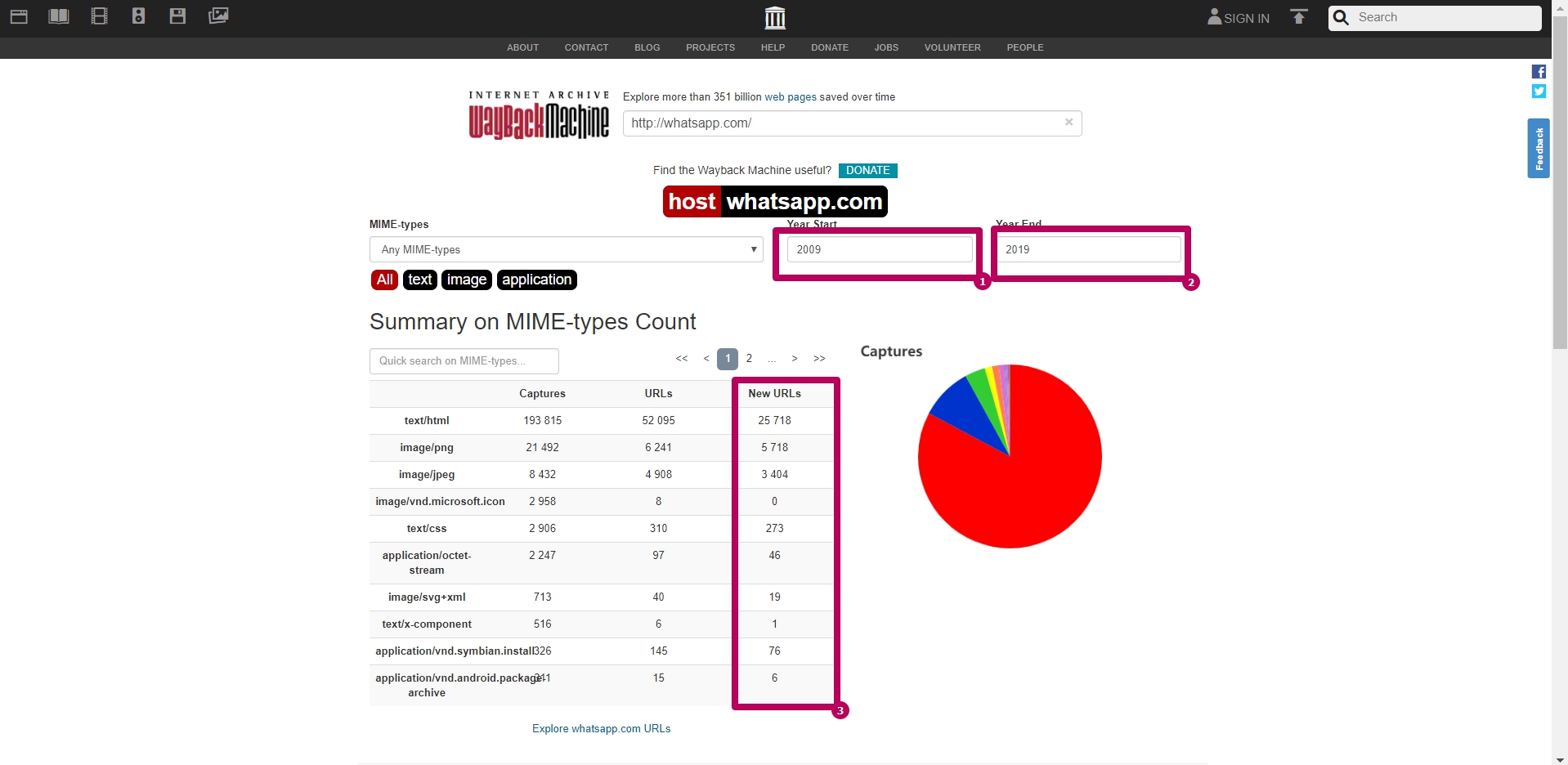

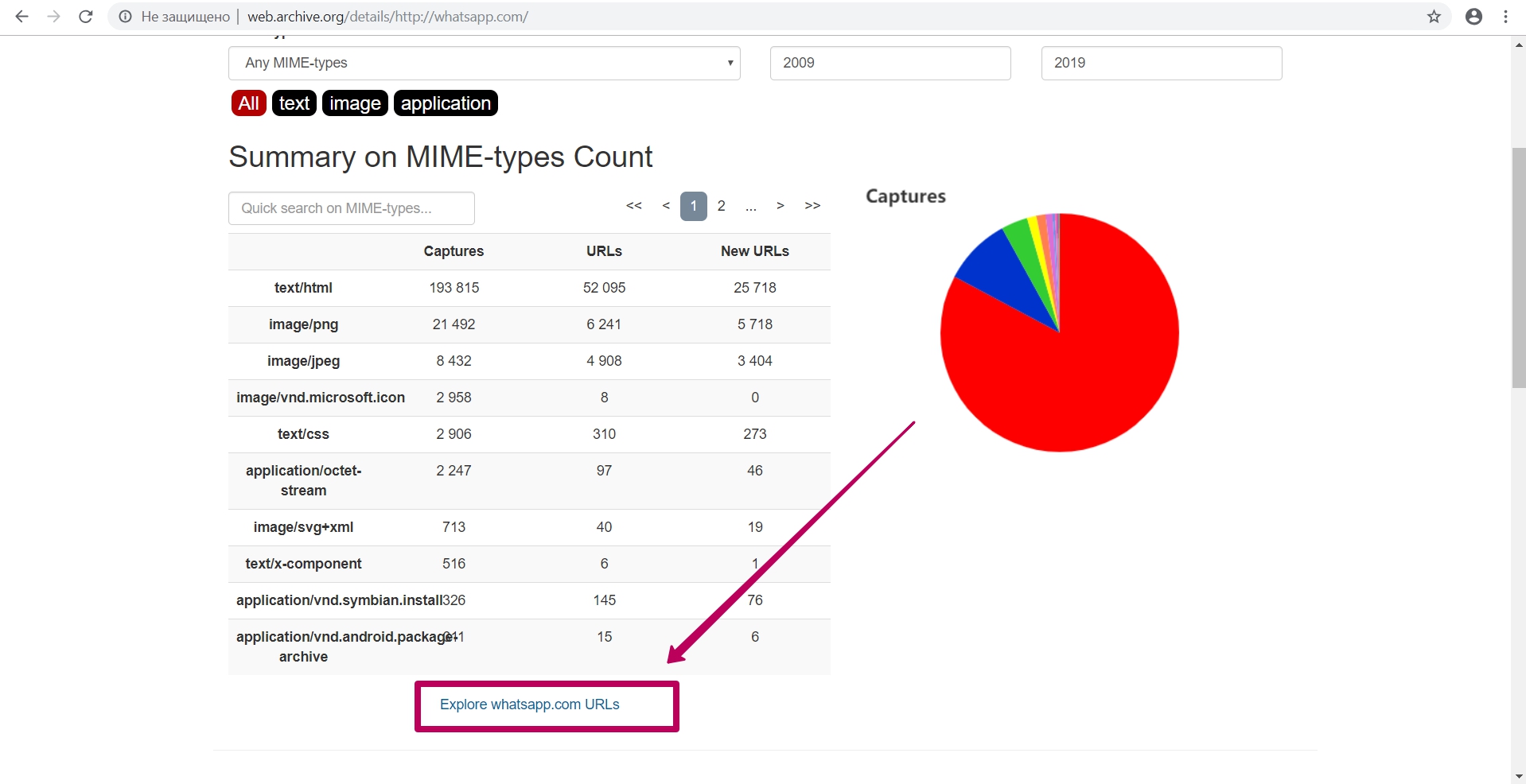

Summary tool

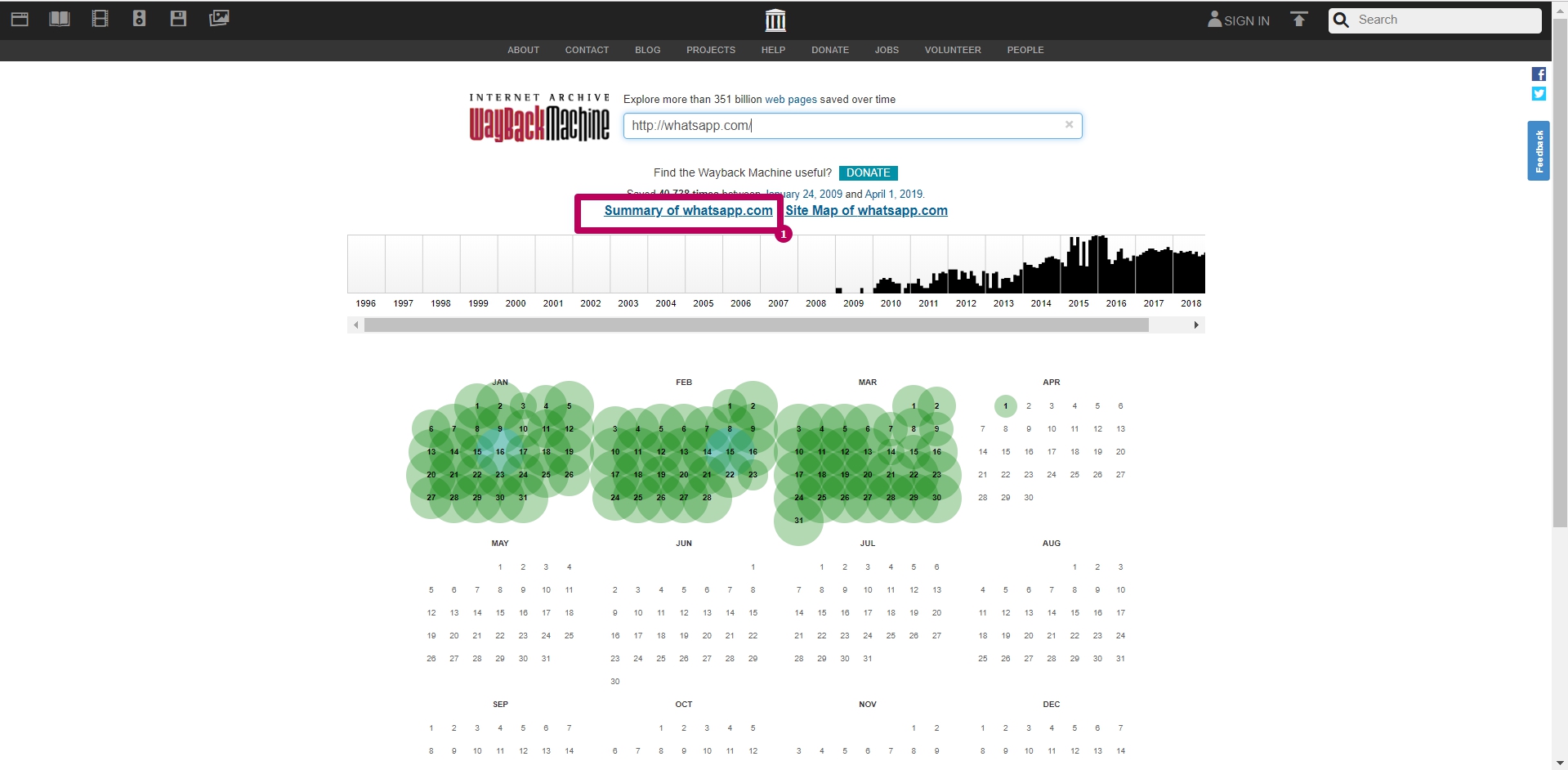

Select Summary tool on web archive main page. These are graphs and charts for website saving. All graphs and tables can be viewed by year.

The most useful information on this page is New URLs column sum. This amount shows us the number of unique files contained in web archive.

Due to the reason that web archive itself could index the page with or without www, this number may be approximate. It means that the same page and its elements can be located at different addresses.

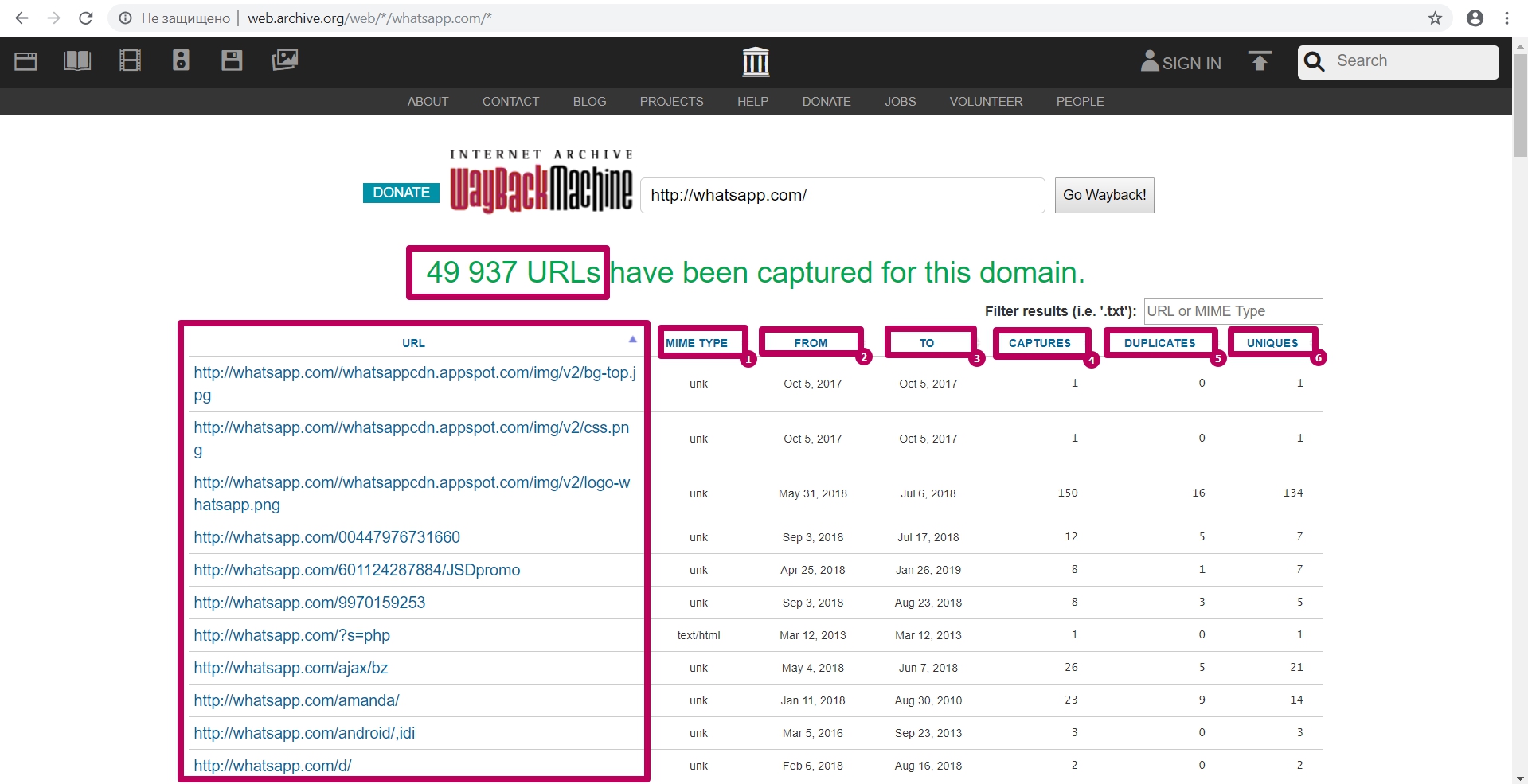

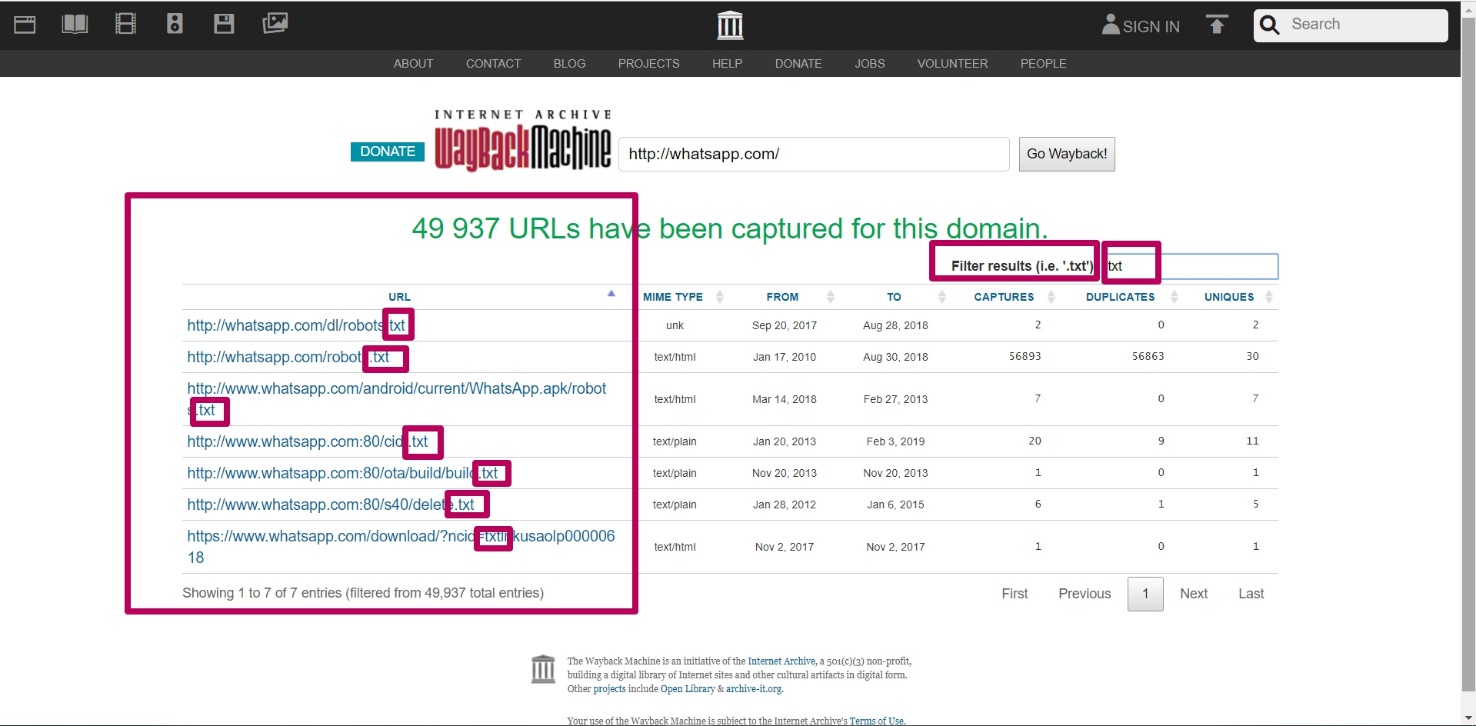

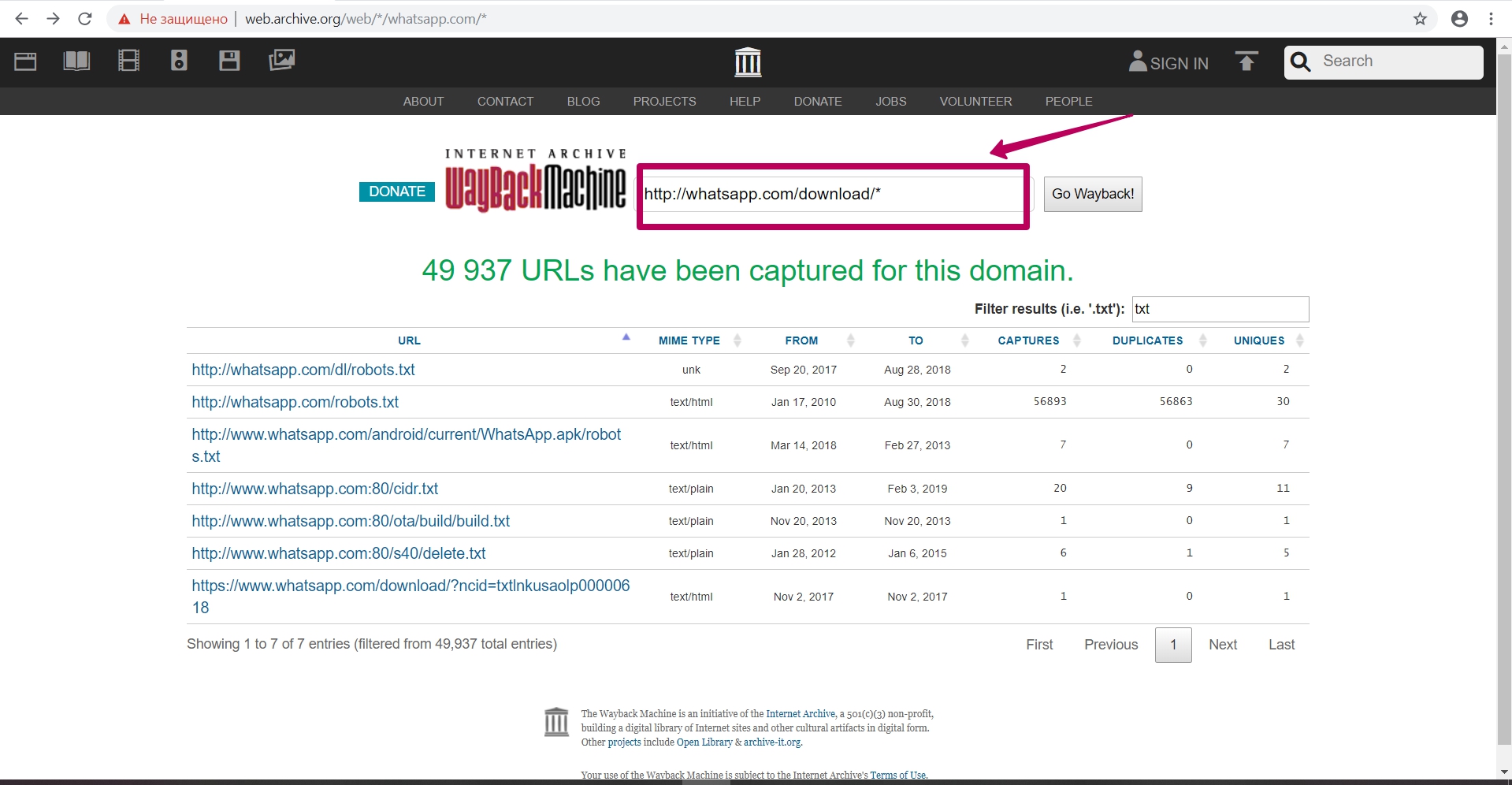

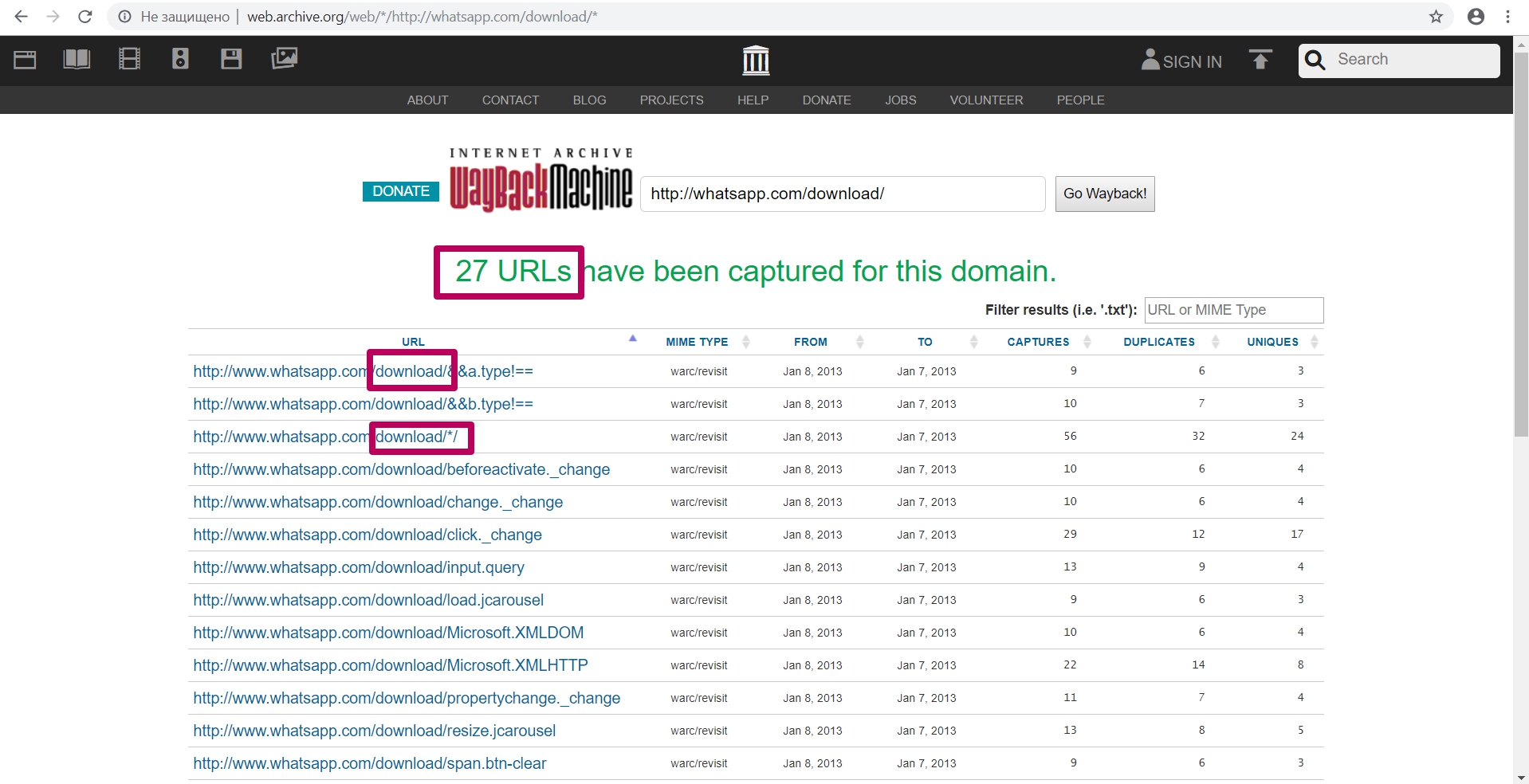

Explore tool

It uploads into the table all the url that were previously indexed by the web archive spider.

Here you can find:

- MIME element type;

- Initial date of element indexing;

- The last date when element was saved;

- Total number of element captures (savings);

- Number of duplicates;

- Number of unique content savings by url.

You can specify any part of the element you are looking for in a filter field: in order to search for website content that is difficult to detect among large number of links.

You can enter part of the path, for example, the path to the folder (required with an asterisk), you can see all the url for the given path (all files from the page or folder) to analyze the indexing of this content.

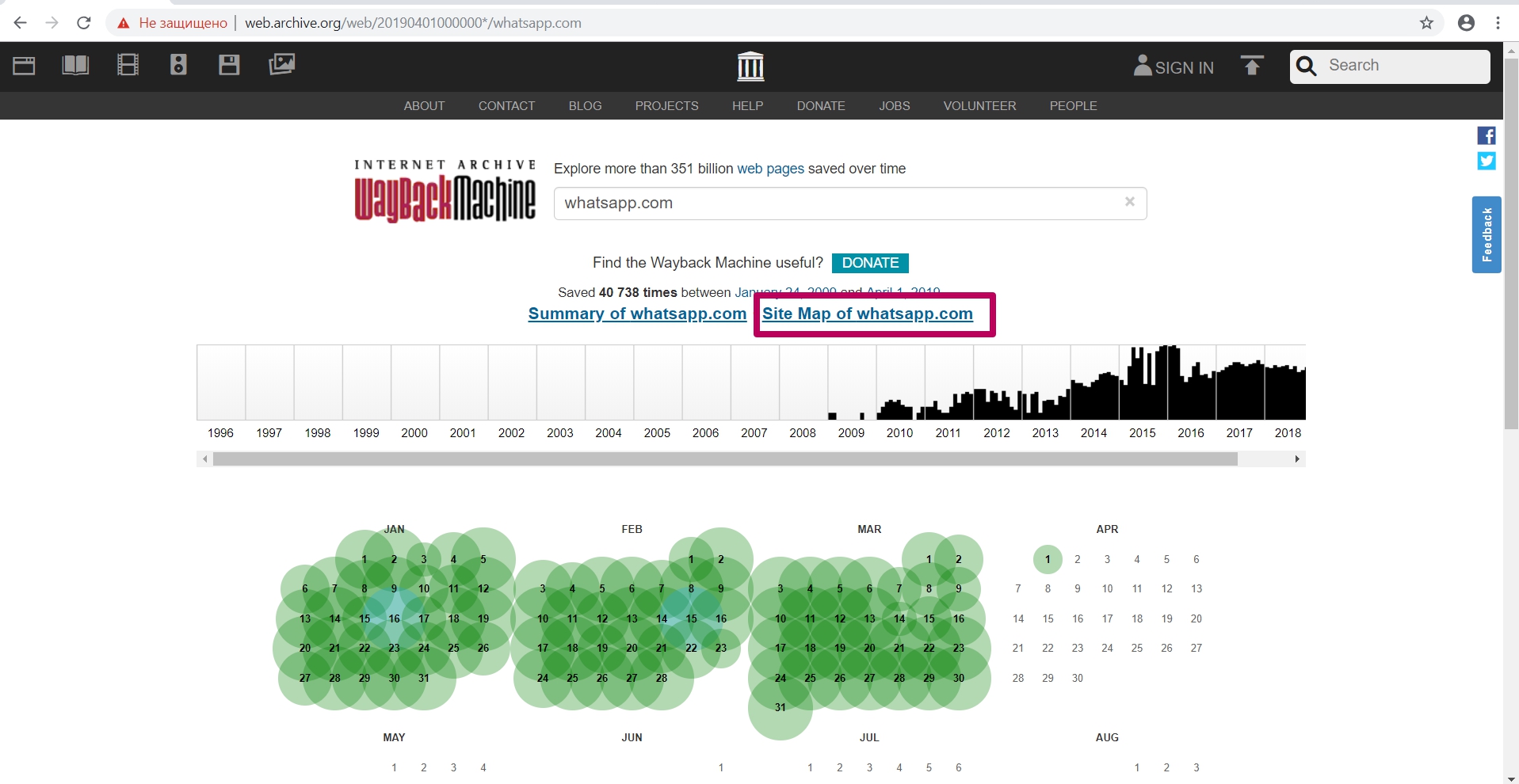

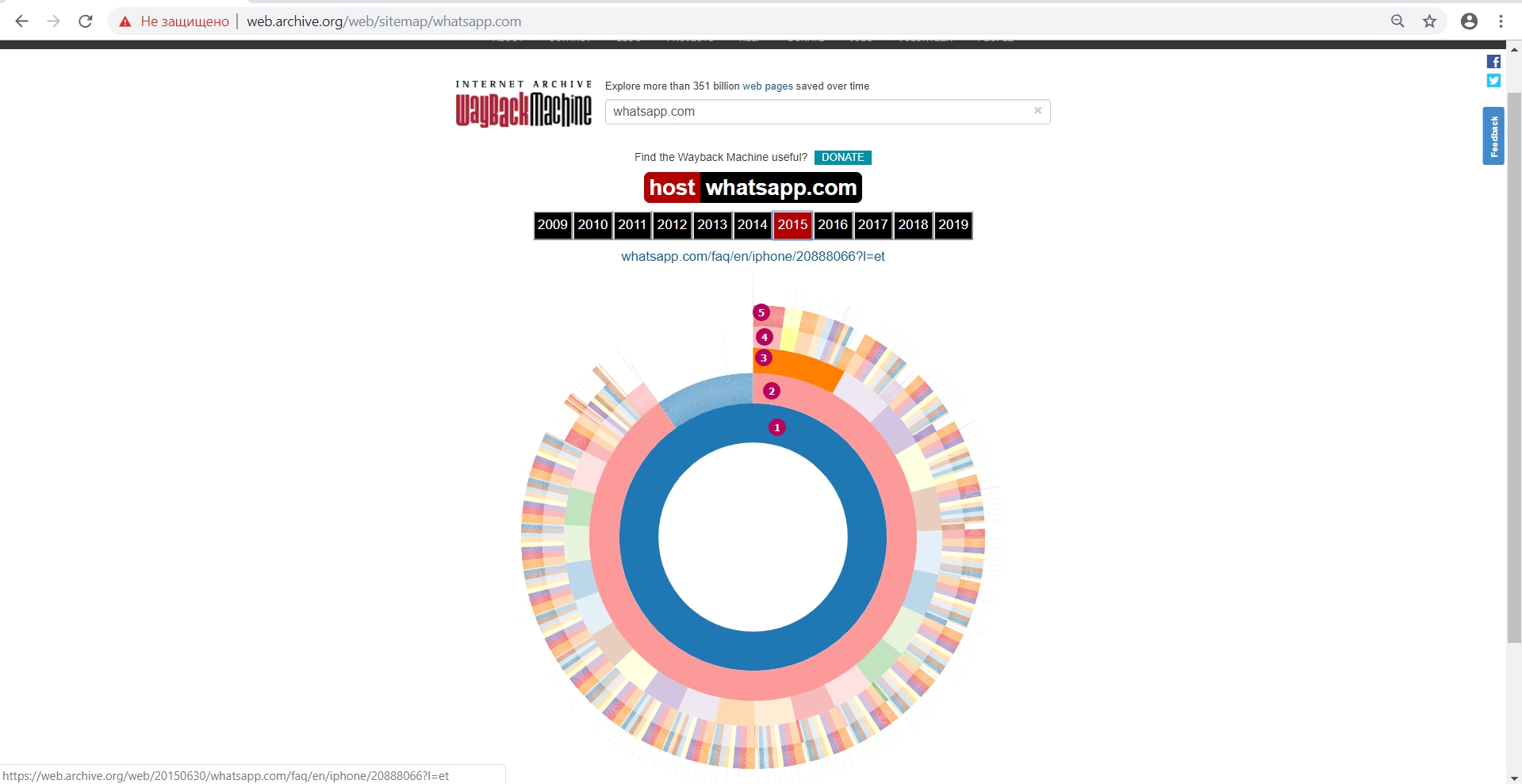

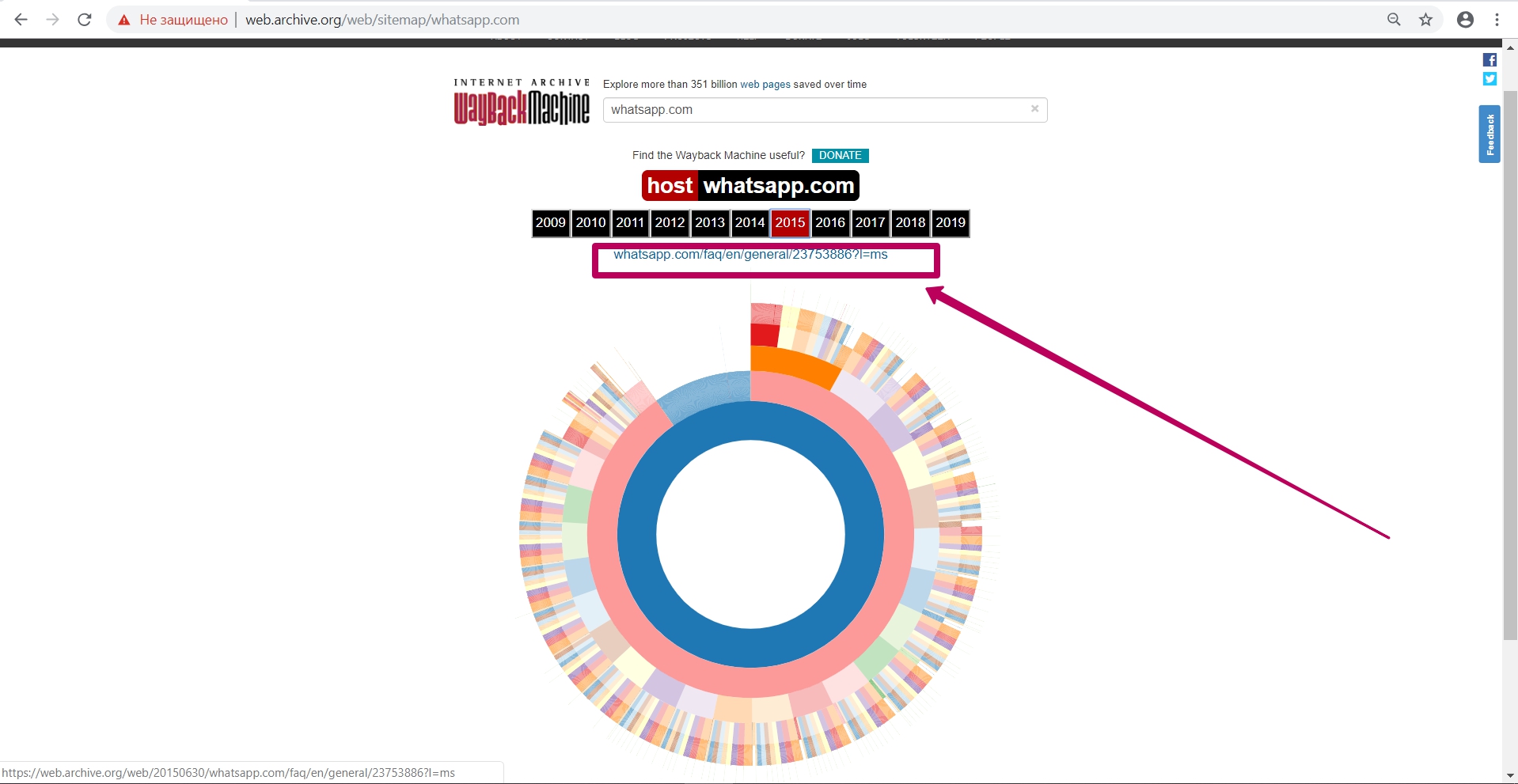

Site Map tool

Click on the appropriate Site Map link on the website main page.

This is a doughnut chart with a separation by year to analyze the elements saved by web archive (which pages) in the context from the main url to the url of the second and n-th level. This tool allows you to determine the year when web archive stopped saving website new content or certain url copies (any response code, except for 200 code).

In the middle of the main page and then along the path structure in the second or third stage, we see website internal pages. There are no other kinds of files, only url saved. It means that we can understand where the archive was able or not to index pages.

Diagram shows:

- Main page

2 - 5. Website pages nesting levels

In addition, by using this tool we can see the internal pages by structure and open them separately in a new tab.

Thus, having selected links to pages and elements with the required date of saving in web archive and having built the structure we need, we can proceed to the next step ― preparing the domain for restoring. But we will talk about this in the next part.

How to restore websites from the Web Archive - archive.org. Part 2

How to restore websites from the Web Archive - archive.org. Part 3

The use of article materials is allowed only if the link to the source is posted: https://archivarix.com/en/blog/1-how-does-it-works-archiveorg/

Web Archive Interface: Instructions for the Summary, Explore, and Site map tools. For reference: Archive.org (Wayback Machine - Internet Archive) was created by Brewster Cale in 1996 about at the same…

In the previous article we examined archive.org operation, and in this article we will talk about a very important stage of site restoring from the Wayback Machine that relates to domain preparation f…

Choosing “BEFORE” limit when restoring websites from archive.org. When domain expires, domain provider or hoster’s parking page may appear. When entering such a page, the Internet Archive will save it…

- Full localization of Archivarix CMS into 13 languages (English, Spanish, Italian, German, French, Portuguese, Polish, Turkish, Japanese, Chinese, Russian, Ukrainian, Belarusian).

- Export all current site data to a zip archive to save a backup or transfer to another site.

- Show and remove broken zip archives in import tools.

- PHP version check during installation.

- Information for installing CMS on a server with NGINX + PHP-FPM.

- In the search, when the expert mode is on, the date/time of the page and a link to its copy in the WebArchive are displayed.

- Improvements to the user interface.

- Code optimization.

If you are a native speaker of a language into which our CMS has not yet been translated, then we invite you to make our product even better. Via Crowdin service you can apply and become our official translator into new languages.

- CLI support to deploy websites right from a command line, imports, settings, stats, history purge and system update.

- Support for password_hash() encrypted passwords that can be used in CLI.

- Expert mode to enable an additional debug information, experimental tools and direct links to WebArchive saved snapshots.

- Tools for broken internal images and links can now return a list of all missing urls instead of deleting.

- Import tool shows corrupted/incomplete zip files that can be removed.

- Improved cookie support to catch up with requirements of modern browsers.

- A setting to select default editor to HTML pages (visual editor or code).

- Changes tab showing text differences is off by default, can be turned on in settings.

- You can roll back to a specific change in the Changes tab.

- Fixed XML sitemap url for websites that are built with a www subdomain.

- Fixed removal temporary files that were created during an installation/import process.

- Faster history purge.

- Femoved unused localization phrases.

- Language switch on the login screen.

- Updated external packages to their most recent versions.

- Optimized memory usage for calculating text differences in the Changes tab.

- Improved support for old versions of php-dom extension.

- An experimental tool to fix file sizes in the database in case you edited files directly on a server.

- An experimental and very raw flat-structure export tool.

- An experimental public key support for the future API features.

- Fixed: History section did not work when there was no zip extension enabled in php.

- New History tab with details of changes when editing text files.

- .htaccess edit tool.

- Ability to clean up backups to the desired rollback point.

- "Missing URLs" section removed from Tools as it is accessible from the dashboard.

- Monitoring and showing free disk space in the dashboard.

- Improved check of the required PHP extensions on startup and initial installation.

- Minor cosmetic changes.

- All external tools updated to latest versions.

- Separate password for safe mode.

- Extended safe mode. Now you can create custom rules and files, but without executable code.

- Reinstalling the site from the CMS without having to manually delete anything from the server.

- Ability to sort custom rules.

- Improved Search & Replace for very large sites.

- Additional settings for the "Viewport meta tag" tool.

- Support for IDN domains on hosting with the old version of ICU.

- In the initial installation with a password, the ability to log out is added.

- If .htaccess is detected during integration with WP, then the Archivarix rules will be added to its beginning.

- When downloading sites by serial number, CDN is used to increase speed.

- Other minor improvements and fixes.

- New dashboard for viewing statistics, server settings and system updates.

- Ability to create templates and conveniently add new pages to the site.

- Integration with Wordpress and Joomla in one click.

- Now in Search & Replace, additional filtering is done in the form of a constructor, where you can add any number of rules.

- Now you can filter the results by domain/subdomains, date-time, file size.

- A new tool to reset the cache in Cloudlfare or enable / disable Dev Mode.

- A new tool for removing versioning in urls, for example, "?ver=1.2.3" in css or js. Allows you to repair even those pages that looked crooked in the WebArchive due to the lack of styles with different versions.

- The robots.txt tool has the ability to immediately enable and add a Sitemap map.

- Automatic and manual creation of rollback points for changes.

- Import can import templates.

- Saving/Importing settings of the loader contains the created custom files.

- For all actions that can last longer than a timeout, a progress bar is displayed.

- A tool to add a viewport meta tag to all pages of a site.

- Tools for removing broken links and images have the ability to account for files on the server.

- A new tool to fix incorrect urlencode links in html code. Rarely, but may come in handy.

- Improved missing urls tool. Together with the new loader, now counts calls to non-existent URLs.

- Regex Tips in Search & Replace.

- Improved checking for missing php extensions.

- Updated all used js tools to the latest versions.

This and many other cosmetic improvements and speed optimizations.