To everyone who has been waiting for top-up discounts!

Dear Archivarix users, congratulations on the upcoming holidays, and thank you for choosing our service to archive and restore your websites!

Read more…

6 Years of Archivarix

It's that special time when we take a moment to reflect not just on our achievements, but also on the incredible journey we've shared with you. This year, Archivarix celebrates its 6th anniversary, and, first and foremost, we want to extend our heartfelt gratitude to you, our dedicated users.

Read more…

Price Changes

Our prices will change on February 1, 2023. Activate the promo code now and get a huge bonus in advance.

Read more…

4 years of Archivarix!

It has been four years since we made the Archivarix service public on September 29, 2017. Users make thousands of restorations every day. The number of servers that distribute downloads and processing among themselves consistently exceeds 40, and the system automatically adds new machines under load.

Read more…

What can be recovered from the Web Archive?

Sometimes our users ask why a website was not fully restored, or why it doesn't work the way they would like. Here are the known issues when restoring sites from archive.org.

Read more…

BLACKFRIDAY

Two big, tasty coupons are valid from Friday, 27.11.2020, to Monday, 30.11.2020. Each gives a balance bonus of 20% or 50% of the amount of your last or new payment.

Read more…

Happy 3rd birthday to Archivarix

Three years ago, on September 29, 2017, our archive.org downloader service was launched. Over these three years we have been continuously developing it: we have created our own CMS, a WordPress plugin and a system for downloading live sites, significantly improved and accelerated the recovery algorithm, and much more.

Read more…

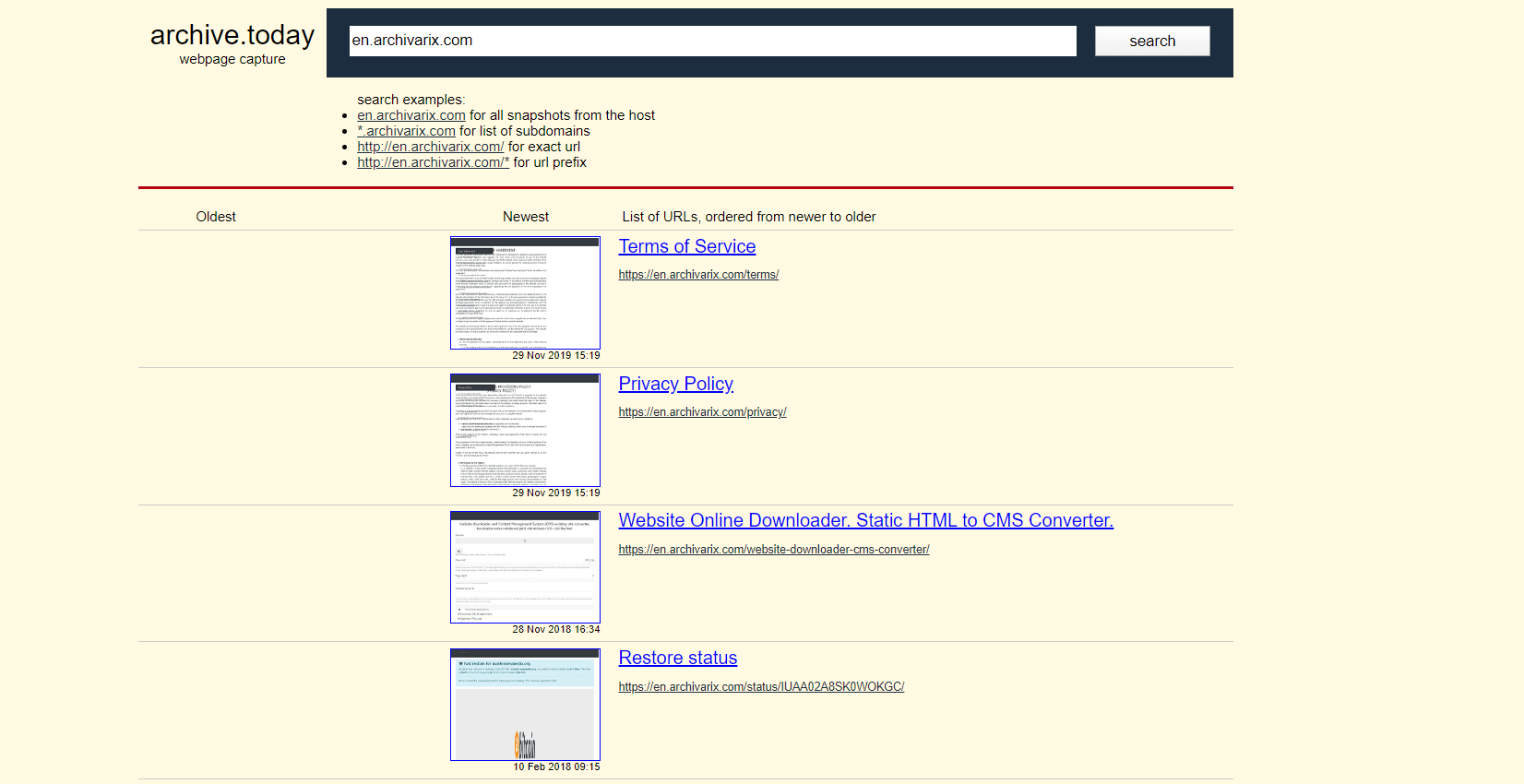

Archivarix.net - Web archive and search engine.

Wayback Machine (web.archive.org) alternative. Internet Archive search engine. Find archived copies of websites. Data from 1996. Full-text search.

In the near future, our team plans to launch a unique service that combines the capabilities of the Internet Archive (archive.org) and a search engine.

Read more…

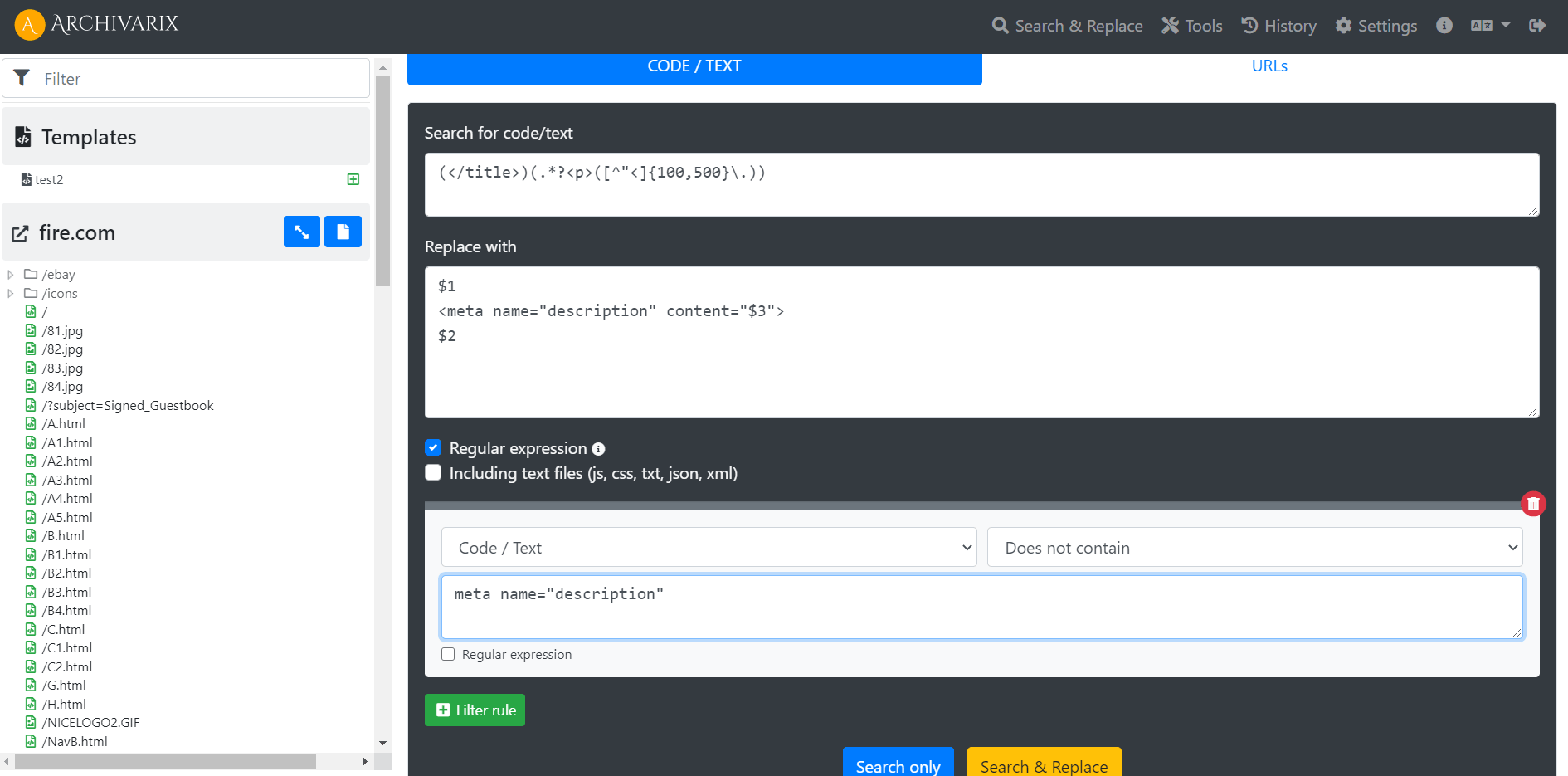

Examples of using regular expressions in Archivarix CMS

How to generate a meta name="description" tag on all pages of a website? How to make the site work from a subdirectory instead of the root?

Read more…
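A minimal sketch of the first question, written in Python because its `re` module is close to (though not identical with) the PCRE syntax PHP uses. It assumes the first `<p>` element is a reasonable source for the description and that pages have a `<head>` tag; the helper name `add_meta_description` and the 160-character limit are hypothetical choices, not Archivarix CMS settings. In the CMS the same idea would be expressed as a regex Search & Replace rule.

```python
import html
import re

DESCRIPTION_RE = re.compile(r'<meta\s+name=["\']description["\']', re.IGNORECASE)
FIRST_P_RE = re.compile(r'<p\b[^>]*>(.*?)</p>', re.IGNORECASE | re.DOTALL)
HEAD_RE = re.compile(r'<head\b[^>]*>', re.IGNORECASE)


def add_meta_description(page: str, max_len: int = 160) -> str:
    """Insert a meta description built from the first paragraph (hypothetical helper)."""
    # Leave pages that already have a description untouched.
    if DESCRIPTION_RE.search(page):
        return page
    match = FIRST_P_RE.search(page)
    if not match:
        return page
    # Strip inner tags, decode entities, collapse whitespace, then truncate.
    text = html.unescape(re.sub(r'<[^>]+>', '', match.group(1)))
    text = re.sub(r'\s+', ' ', text).strip()[:max_len]
    tag = '<meta name="description" content="%s">' % html.escape(text, quote=True)
    # Put the new tag right after the opening <head> tag (first occurrence only).
    return HEAD_RE.sub(lambda m: m.group(0) + '\n' + tag, page, count=1)
```

Because the function returns pages that already have a description unchanged, running it twice over the same site is harmless.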

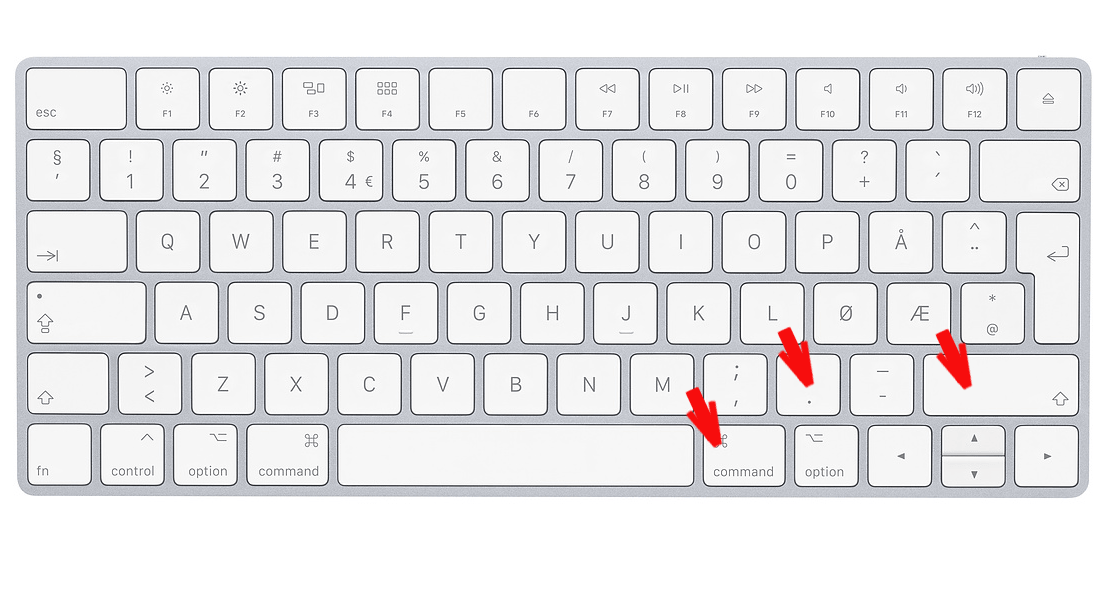

How to show hidden files on macOS.

How to show hidden files on macOS. How to view and edit files whose names start with a dot (like .htaccess) in macOS?

Read more…

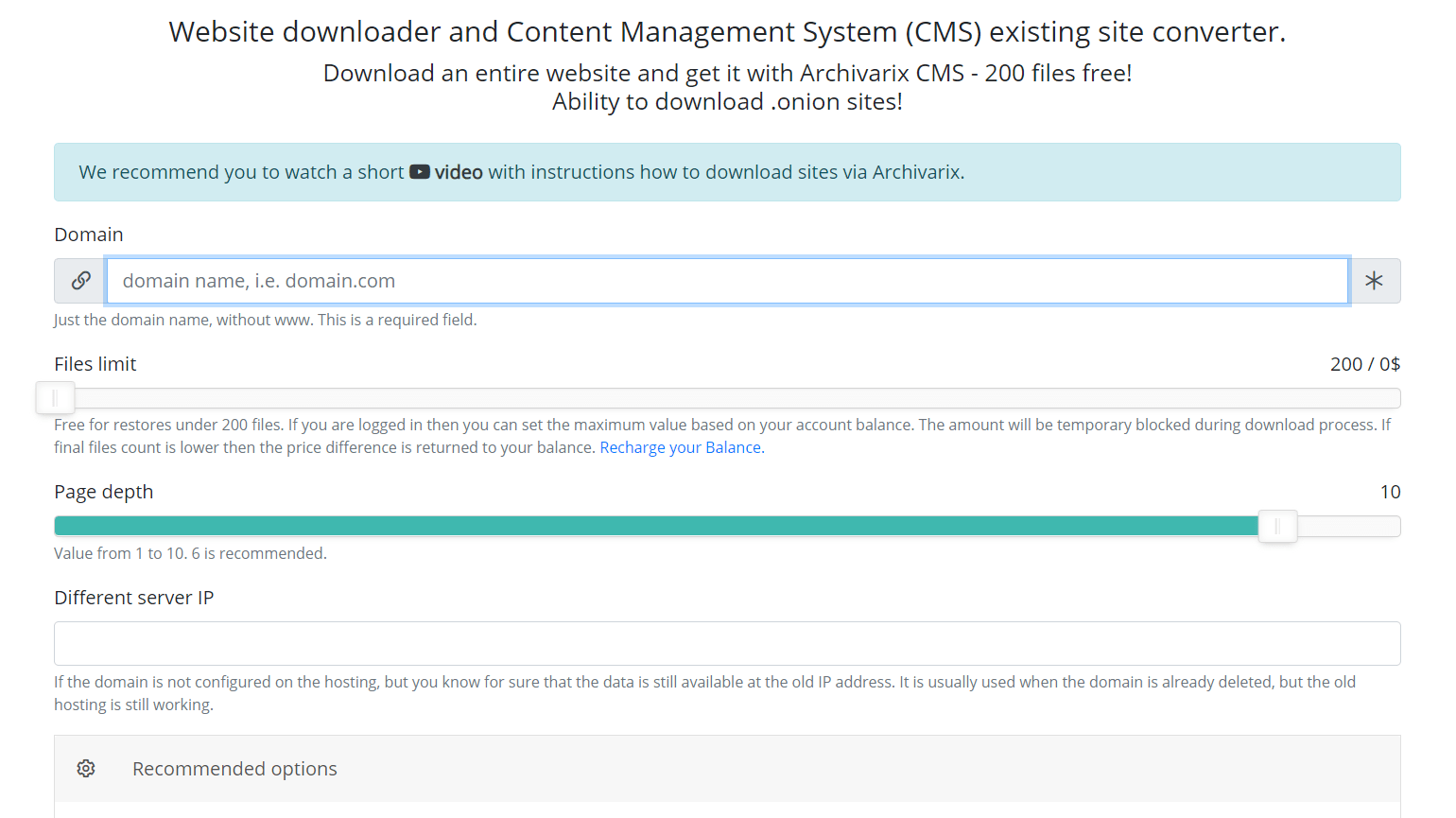

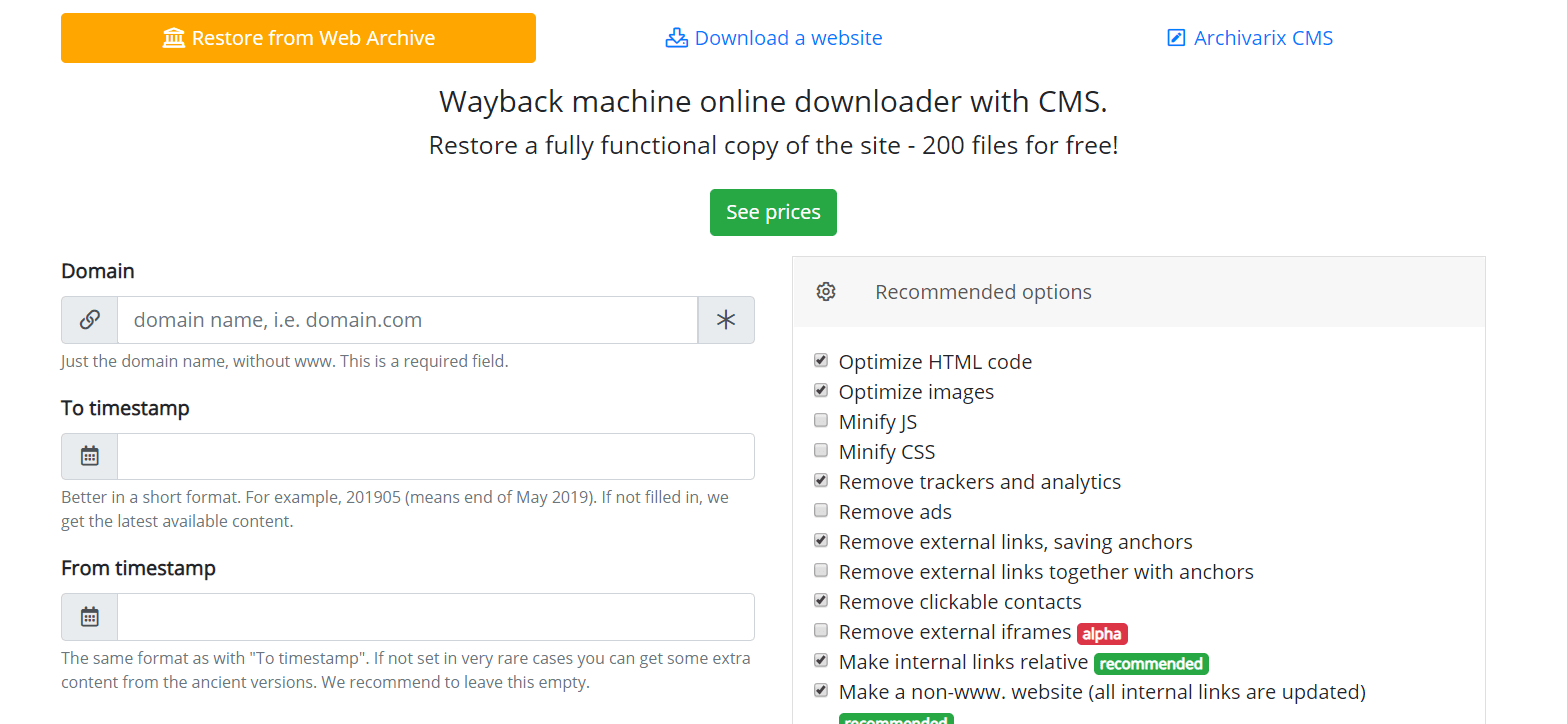

Website downloader. How to choose the file limit?

Our Website downloader system lets you download up to 200 files from a website for free. If the site has more files and you need all of them, you can pay for this service; the cost depends on the number of files. How can you find out how many files are actually on the website and how much it will cost to download them?

Read more…
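One rough way to estimate the size of a live site is to count the URLs in its sitemap.xml. The Python sketch below does that with the standard XML parser and compares the result with the 200-file free limit. This is an assumption for illustration, not how Archivarix counts files: a sitemap lists pages only, not the images, CSS and JS files a downloader also saves, and it may be incomplete, so treat the number as a lower bound. The helper names are hypothetical.

```python
import xml.etree.ElementTree as ET

# Namespace defined by the sitemaps.org protocol.
SITEMAP_NS = "{http://www.sitemaps.org/schemas/sitemap/0.9}"
FREE_FILE_LIMIT = 200  # free Website downloader limit mentioned above


def count_sitemap_urls(xml_text: str) -> int:
    """Count unique <loc> URLs inside <url> entries of a urlset sitemap."""
    root = ET.fromstring(xml_text)
    urls = set()
    for url in root.iter(SITEMAP_NS + "url"):
        loc = url.find(SITEMAP_NS + "loc")
        if loc is not None and loc.text:
            urls.add(loc.text.strip())
    return len(urls)


def files_over_free_limit(file_count: int, free_limit: int = FREE_FILE_LIMIT) -> int:
    """How many files exceed the free limit (0 if the site fits)."""
    return max(0, file_count - free_limit)
```

A sitemap index file (one that lists other sitemaps) would have to be expanded first; this sketch only handles a plain `urlset`.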

Regular expressions used in Archivarix CMS

This article describes the regular expressions used to search and replace content in websites restored with the Archivarix system. They are not unique to this system: if you know regular expressions in PHP, Perl, Java or other programming languages, you already know how to use our search and replace. If not, we hope this article helps you.

Read more…
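As a small illustration of capture groups and backreferences, the Python sketch below turns absolute links to a site into root-relative ones. `example.com` is a placeholder domain, and Python's `re` differs from PHP's PCRE in some details (PHP replacement strings, for instance, also accept `$1`), so treat this as a sketch of the technique rather than a ready-made Archivarix rule.

```python
import re

# Placeholder domain; replace example\.com with your own (dots escaped).
ABSOLUTE_LINK_RE = re.compile(
    r'(href|src)="https?://(?:www\.)?example\.com/([^"]*)"', re.IGNORECASE
)


def make_links_relative(html_text: str) -> str:
    # \1 = attribute name (href or src), \2 = path after the domain's first slash.
    return ABSOLUTE_LINK_RE.sub(r'\1="/\2"', html_text)
```

Links written without a slash after the domain (`https://example.com`) and links to other domains are left alone by this pattern.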

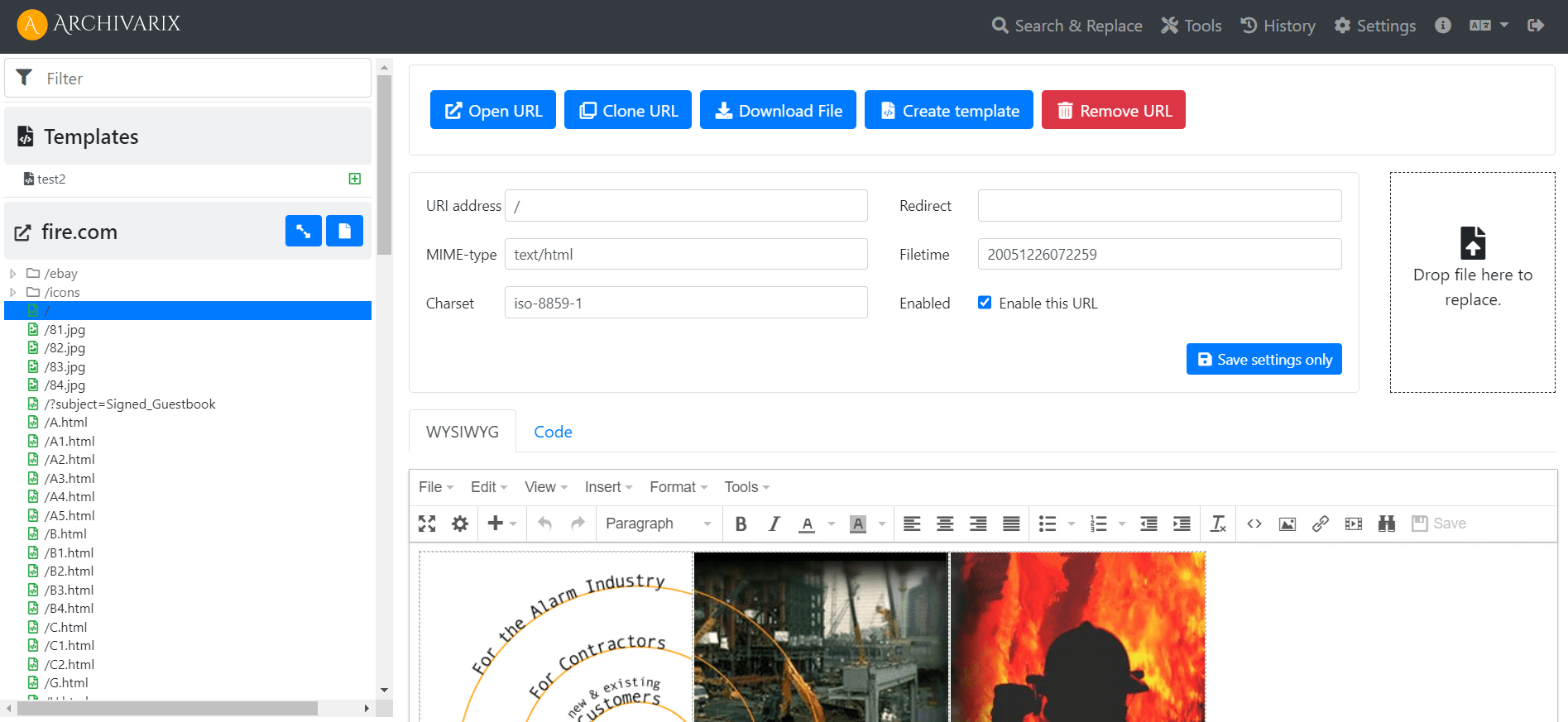

Simple and compact Archivarix CMS. Flat-file CMS for downloaded websites.

To make it convenient for you to edit websites restored in our system, we have developed a simple flat-file CMS that consists of just one small PHP file. Despite its size, this CMS is a powerful and versatile tool for working with your sites. It has all the basic features of any CMS, as well as special features for webmasters building PBNs from content restored from the Web Archive.

Read more…

Best Wayback Machine alternatives. How to find deleted websites.

The Wayback Machine is the best-known and largest archive of websites in the world, with more than 400 billion pages on its servers. Are there other archiving services like Archive.org?

Read more…

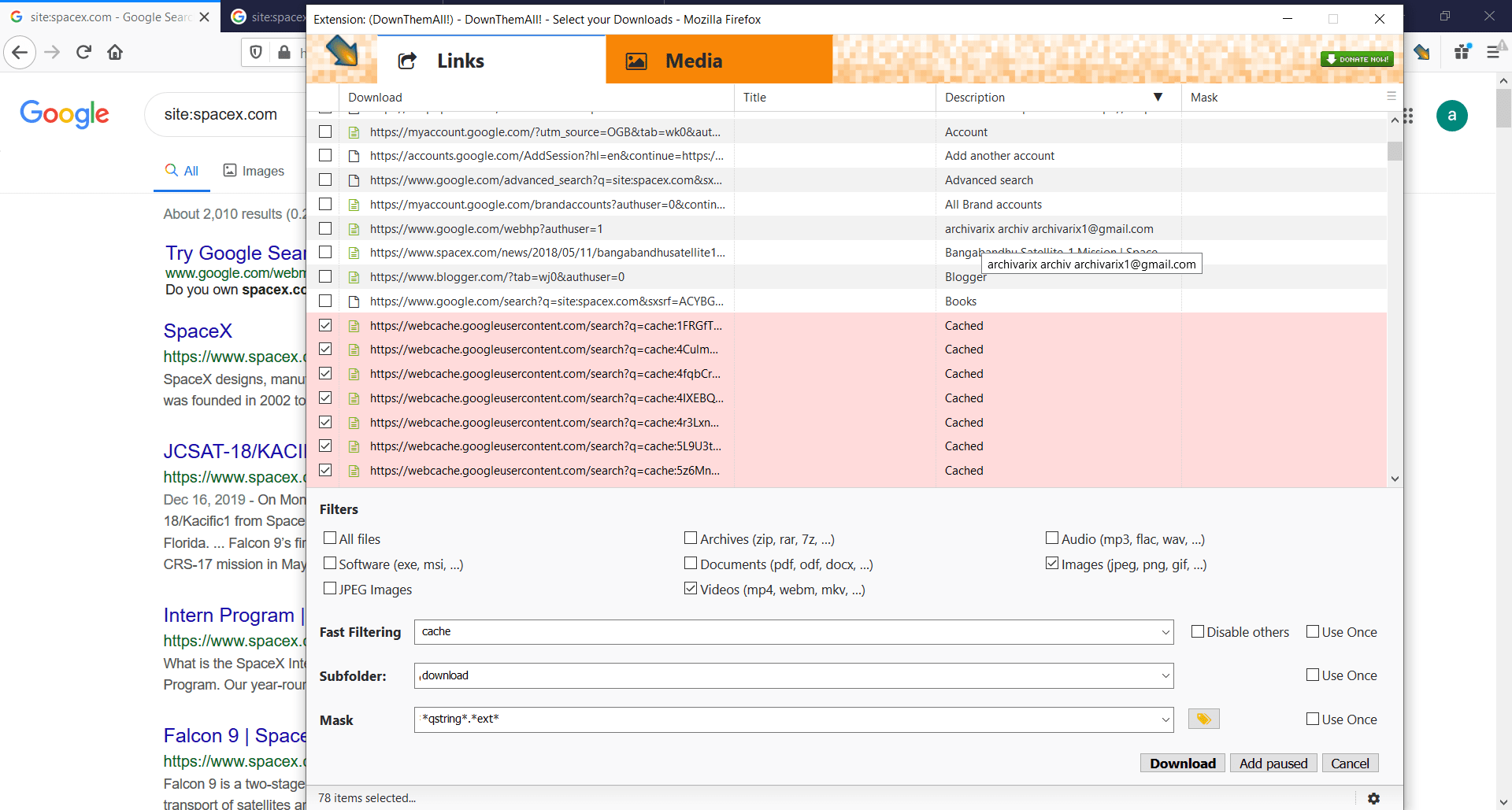

How to download an entire website from Google Cache?

If a website was recently deleted but the Wayback Machine didn't save its latest version, what can you do to get the content? Google Cache can help. All you need is to install this plugin -

Read more…

How to recover deleted YouTube videos?

Sometimes you see the "Video unavailable" message on YouTube. Usually it means that YouTube has deleted the video from its servers. But there is an easy way to get it from the Wayback Machine. First, you need the YouTube video link. It looks like https://www.youtube.com/watch?v=1vpS_-nN3JM, where the characters after watch?v= are the video code.

Read more…
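Taking the code after `watch?v=` can be done with Python's standard URL parser instead of by hand. This sketch also accepts `youtu.be` short links, which is our own addition; the helper name `youtube_video_id` is hypothetical, and the function only extracts the ID, it does not query the Wayback Machine.

```python
from typing import Optional
from urllib.parse import parse_qs, urlparse

WATCH_HOSTS = {"youtube.com", "www.youtube.com", "m.youtube.com"}


def youtube_video_id(url: str) -> Optional[str]:
    """Return the video ID from a YouTube watch or youtu.be link, else None."""
    parsed = urlparse(url)
    host = parsed.netloc.lower()
    if host == "youtu.be":
        # Short links carry the ID as the path: https://youtu.be/<id>
        return parsed.path.lstrip("/") or None
    if host in WATCH_HOSTS and parsed.path == "/watch":
        # Regular links carry it in the v= query parameter.
        values = parse_qs(parsed.query).get("v")
        return values[0] if values else None
    return None
```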

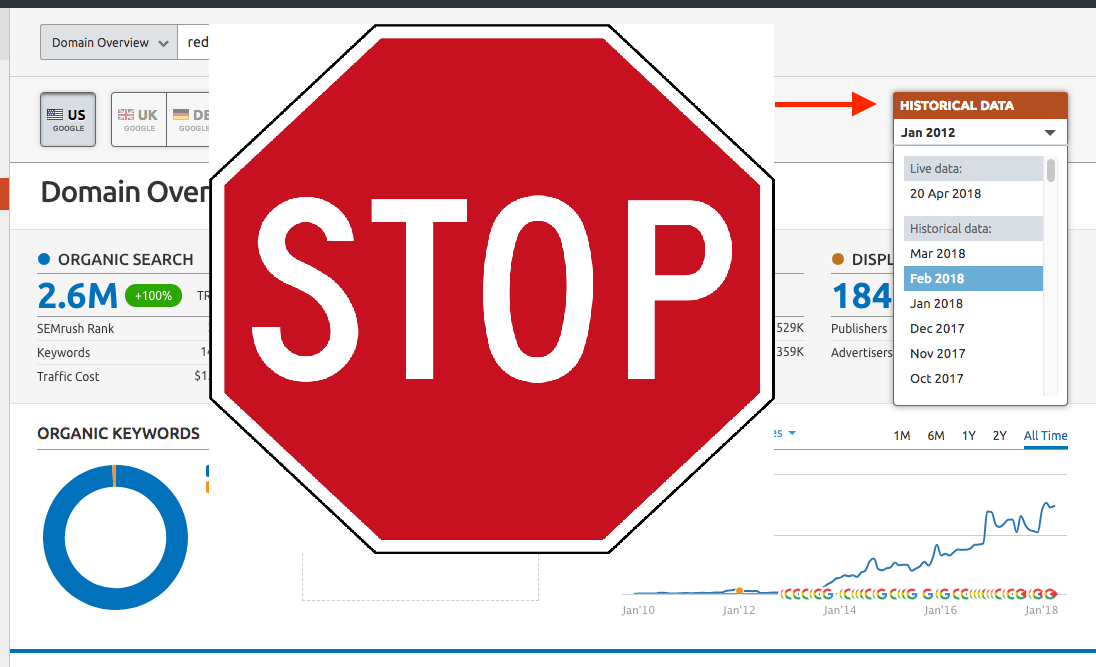

How to hide your backlinks from competitors?

If you are building a PBN (Private Blog Network), you probably don't want other webmasters to know where you get your backlinks. Many services perform backlink and keyword analysis; the best known are Majestic, Ahrefs and Semrush. All of them have their own bots that can be blocked. One way is to write Disallow rules for these bots in the robots.txt file, but that file is visible to everyone, and it may become one of the footprints that let competitors running backlink analysis identify the website as part of your PBN.

Read more…
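One alternative to robots.txt is to refuse these crawlers on the server by their User-Agent, so the block list is not publicly readable. The Python sketch below shows only the matching logic. The tokens `AhrefsBot`, `SemrushBot` and `MJ12bot` (Majestic's crawler) are the names these services publish, but the list is an assumption to check against each service's documentation, and this is not necessarily the method the full article recommends. A crawler that fakes its User-Agent will still get through.

```python
# Case-insensitive User-Agent substrings of the crawlers to refuse (assumed list).
BLOCKED_BOT_TOKENS = ("ahrefsbot", "semrushbot", "mj12bot")


def is_blocked_bot(user_agent: str) -> bool:
    """True if the User-Agent header contains one of the blocked crawler tokens."""
    ua = user_agent.lower()
    return any(token in ua for token in BLOCKED_BOT_TOKENS)


def response_status(user_agent: str) -> int:
    # 403 Forbidden for listed crawlers, 200 OK for everyone else.
    return 403 if is_blocked_bot(user_agent) else 200
```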

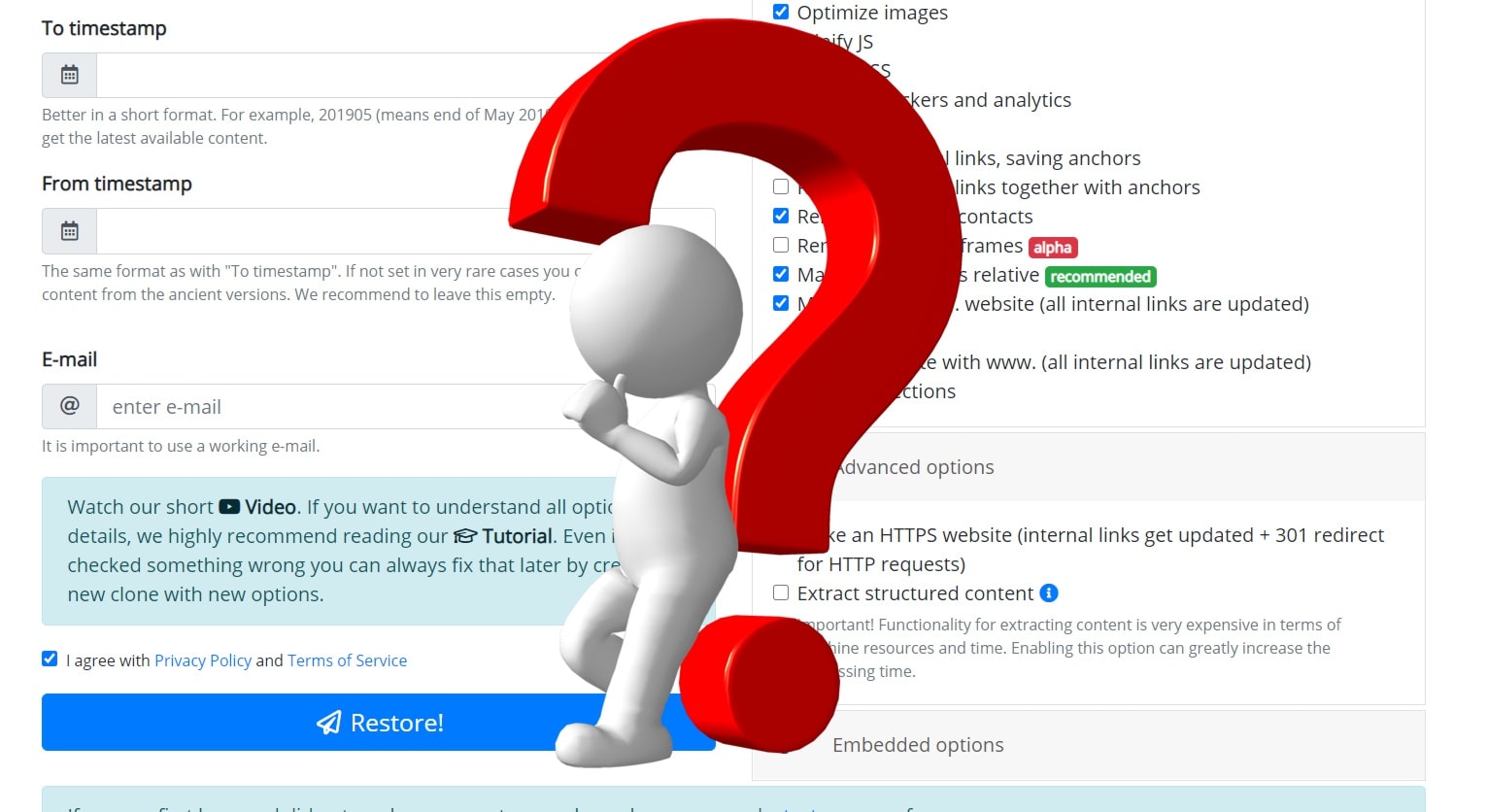

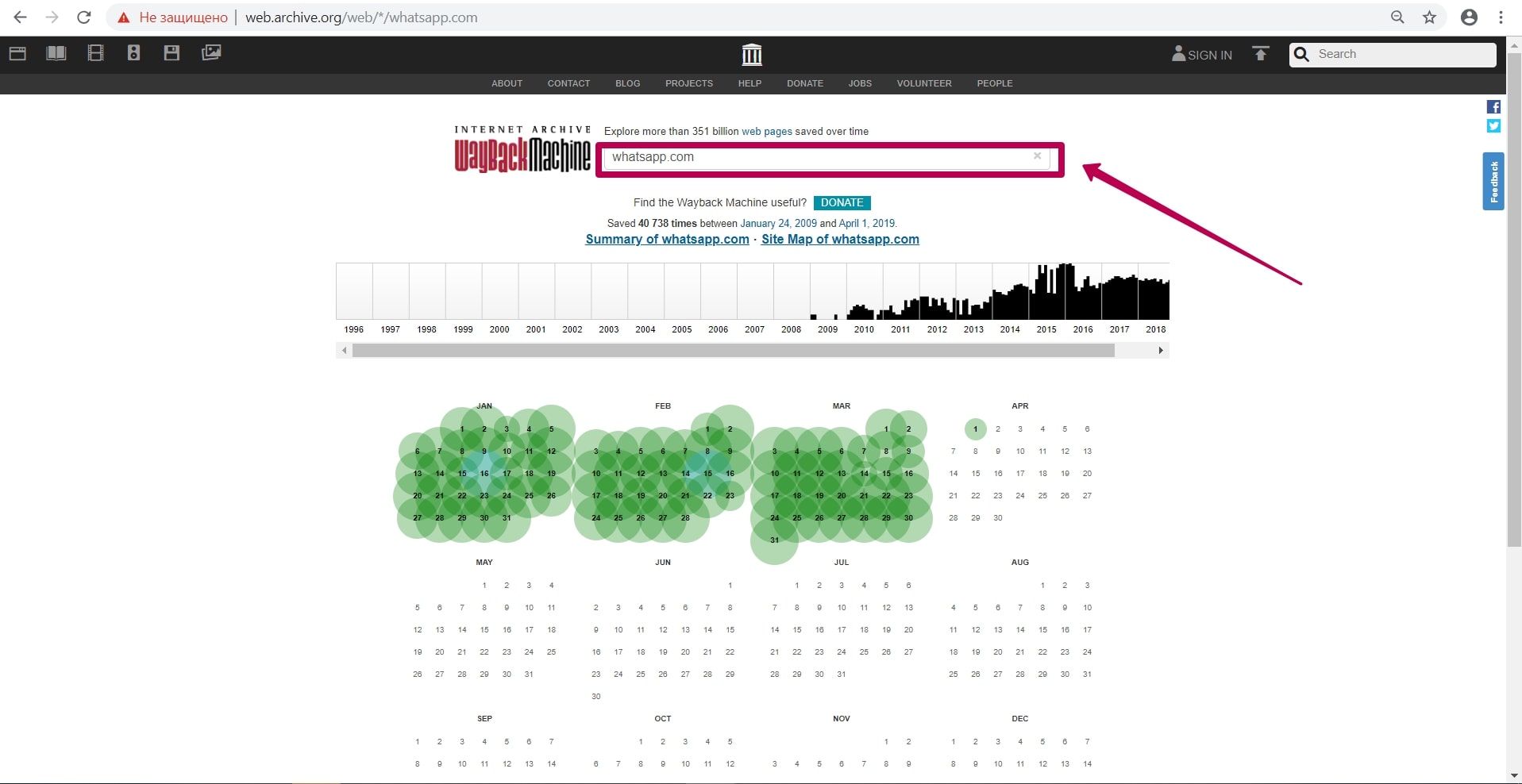

How to restore websites from the Web Archive - archive.org. Part 3

Choosing the “BEFORE” limit when restoring websites from archive.org. When a domain expires, the registrar's or hosting provider's parking page may appear on it. When the Internet Archive captures such a page, it saves it as a fully working one and marks the capture in the calendar. If you restore a website from the calendar using such a date, you will see that parking page instead of a normal page. How can you avoid this problem and find the date when all of the website's pages were still working, so that you can restore it?

Read more…
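Once you know roughly when the domain expired, the BEFORE limit is simply a timestamp earlier than that moment. The sketch below picks the newest capture older than a chosen limit from rows in the Wayback CDX API's JSON format (a header row, then one row per capture). It assumes you fetched those rows yourself; the field names are the CDX defaults, and `latest_snapshot_before` is a hypothetical helper. A parking page is usually also served with status 200, so the status filter only skips redirects and errors: it is choosing the limit before the expiry date that keeps the parking page out.

```python
from typing import List, Optional


def latest_snapshot_before(cdx_rows: List[List[str]], before: str) -> Optional[str]:
    """Pick the newest 200-status capture strictly earlier than `before`.

    `cdx_rows` is assumed to be CDX JSON output: a header row followed by records.
    Timestamps are 14-digit YYYYMMDDhhmmss strings, so plain string comparison
    orders them chronologically.
    """
    if not cdx_rows:
        return None
    header, *records = cdx_rows
    ts_i = header.index("timestamp")
    status_i = header.index("statuscode")
    limit = before.ljust(14, "0")  # "2019" -> "20190000000000"
    candidates = [r[ts_i] for r in records if r[status_i] == "200" and r[ts_i] < limit]
    return max(candidates) if candidates else None
```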

How to restore websites from the Web Archive - archive.org. Part 2

In the previous article we examined how archive.org works. In this article we will talk about a very important stage of restoring a site from the Wayback Machine: preparing the domain for restoration. This step helps ensure that you restore the maximum amount of content on your website.

Read more…

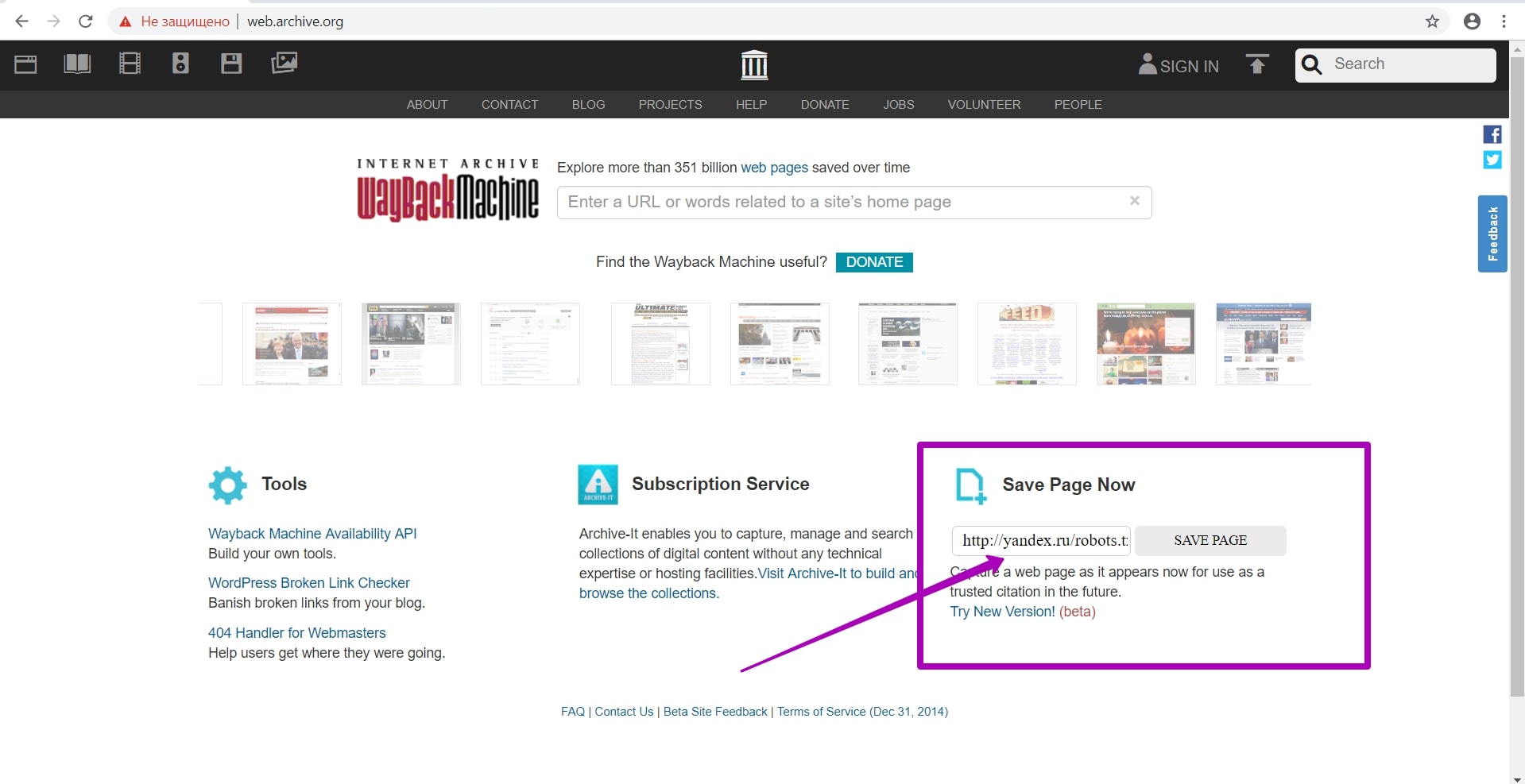

How to restore websites from the Web Archive - archive.org. Part 1

Web Archive interface: instructions for the Summary, Explore and Site map tools. For reference: Archive.org (Wayback Machine - Internet Archive) was created by Brewster Kahle in 1996, at about the same time as he founded Alexa Internet, a company that collects statistics on website traffic. In October of that year, it began archiving and storing copies of web pages.

Read more…

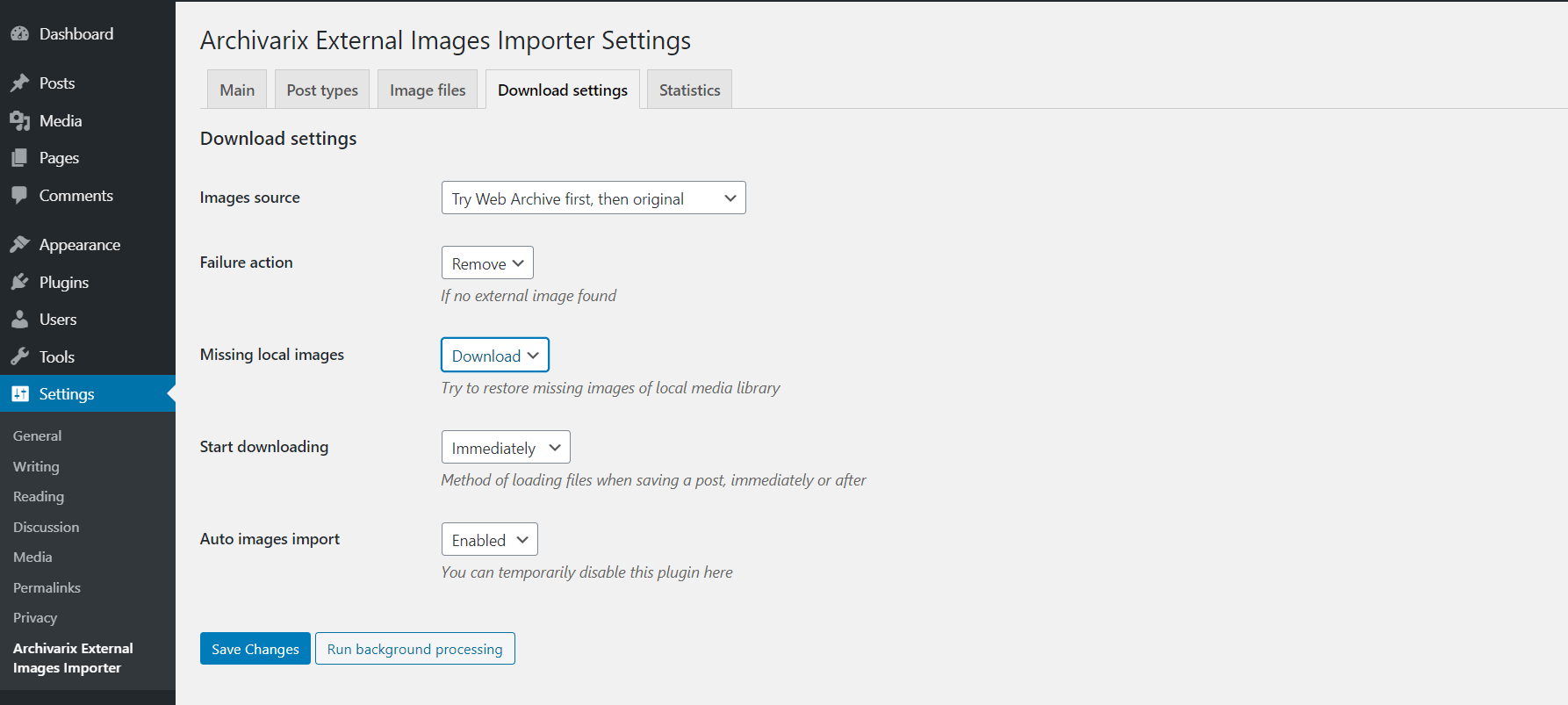

How to transfer content from the Wayback Machine (archive.org) to WordPress?

By using the “Extract structured content” option, you can easily build a WordPress blog either from a site found in the Web Archive or from any other website. To do this, first find the source website, then in one of our download tools, "Restore A Website" or "Download A Website", open "Advanced Options" and check "Extract structured content". After that, enter all your data (email, timestamps, etc.) and start the download.

Read more…

How does Archivarix work?

The Archivarix system is designed to download and restore both sites that are no longer online, from the Web Archive, and sites that are currently online. This is the main difference from other “downloaders” and “site parsers”: the goal of Archivarix is not only to download a website but also to restore it in a form that will work on your server.

Let's start with the module that downloads websites from the Web Archive. It runs on virtual servers located in California. This location was chosen to get the highest possible connection speed to the Web Archive, whose servers are in San Francisco. After you enter data in the appropriate field on the module’s page https://en.archivarix.com/restore/, it takes a screenshot of the archived website and queries the Web Archive API for the list of files archived as of the specified recovery date.

Read more…
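The module's actual request is not described here, but the Wayback Machine exposes a public CDX server API that returns such file lists. As a hedged illustration, this Python sketch only builds a query URL for every 200-status capture of a domain up to a given date; the parameter names are those of the CDX API, while `cdx_query_url` is a hypothetical helper and no request is sent.

```python
from urllib.parse import urlencode

CDX_ENDPOINT = "https://web.archive.org/cdx/search/cdx"


def cdx_query_url(domain: str, to_timestamp: str) -> str:
    """Build a CDX API URL listing captures of a whole domain up to a date."""
    params = {
        "url": domain,
        "matchType": "domain",        # include subdomains
        "to": to_timestamp,           # latest capture date, YYYYMMDDhhmmss prefix
        "filter": "statuscode:200",   # skip redirects and errors
        "output": "json",             # header row + one row per capture
        "fl": "timestamp,original,mimetype,statuscode",
    }
    return CDX_ENDPOINT + "?" + urlencode(params)
```

The response contains every matching capture, so to restore one version per URL you would keep, for each `original`, the latest timestamp returned.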

Latest news:

2020.11.03

The new version of the CMS is more convenient and easier to understand for webmasters around the world.

- Full localization of Archivarix CMS into 13 languages (English, Spanish, Italian, German, French, Portuguese, Polish, Turkish, Japanese, Chinese, Russian, Ukrainian, Belarusian).

- Export all current site data to a zip archive to save a backup or transfer it to another site.

- Show and remove broken zip archives in import tools.

- PHP version check during installation.

- Instructions for installing the CMS on a server with NGINX + PHP-FPM.

- When expert mode is on, search results show each page's date/time and a link to its copy in the WebArchive.

- Improvements to the user interface.

- Code optimization.

If you are a native speaker of a language into which our CMS has not yet been translated, we invite you to help make our product even better. You can apply via the Crowdin service to become our official translator into new languages.

2020.10.06

A new Archivarix CMS version.

- CLI support for deploying websites right from the command line, plus imports, settings, stats, history purge and system updates.

- Support for passwords hashed with password_hash(), which can be used in the CLI.

- Expert mode, which enables additional debug information, experimental tools and direct links to snapshots saved in the WebArchive.

- Tools for broken internal images and links can now return a list of all missing URLs instead of deleting them.

- The import tool shows corrupted/incomplete zip files that can be removed.

- Improved cookie support to meet the requirements of modern browsers.

- A setting to choose the default editor for HTML pages (visual editor or code).

- The Changes tab, which shows text differences, is now off by default and can be turned on in settings.

- You can roll back to a specific change in the Changes tab.

- Fixed the XML sitemap URL for websites built with a www subdomain.

- Fixed removal of temporary files created during installation/import.

- Faster history purge.

- Removed unused localization phrases.

- Language switch on the login screen.

- Updated external packages to their latest versions.

- Optimized memory usage when calculating text differences in the Changes tab.

- Improved support for old versions of the php-dom extension.

- An experimental tool to fix file sizes in the database if you edited files directly on the server.

- An experimental and very raw flat-structure export tool.

- Experimental public key support for future API features.

2020.06.08

The first June update of Archivarix CMS with new, convenient features.

- Fixed: the History section did not work when the zip extension was not enabled in PHP.

- New History tab with details of changes when editing text files.

- .htaccess edit tool.

- Ability to clean up backups to the desired rollback point.

- "Missing URLs" section removed from Tools as it is accessible from the dashboard.

- Monitoring and showing free disk space in the dashboard.

- Improved check of the required PHP extensions on startup and initial installation.

- Minor cosmetic changes.

- All external tools updated to latest versions.

2020.05.21

An update that web studios and those using outsourcing will appreciate.

- Separate password for safe mode.

- Extended safe mode. Now you can create custom rules and files, but without executable code.

- Reinstalling the site from the CMS without having to manually delete anything from the server.

- Ability to sort custom rules.

- Improved Search & Replace for very large sites.

- Additional settings for the "Viewport meta tag" tool.

- Support for IDN domains on hosting with old versions of ICU.

- After an initial installation with a password, you can now log out.

- If an .htaccess file is detected during integration with WP, the Archivarix rules are added at its beginning.

- When downloading sites by serial number, a CDN is used to increase speed.

- Other minor improvements and fixes.

2020.05.12

Our Archivarix CMS is developing by leaps and bounds. The new update adds the following:

- New dashboard for viewing statistics, server settings and system updates.

- Ability to create templates and conveniently add new pages to the site.

- One-click integration with WordPress and Joomla.

- Search & Replace now offers additional filtering through a rule builder where you can add any number of rules.

- You can now filter the results by domain/subdomain, date/time and file size.

- A new tool to purge the Cloudflare cache or enable/disable Dev Mode.

- A new tool for removing version strings from URLs, for example "?ver=1.2.3" in CSS or JS links. It can repair even pages that looked broken in the WebArchive because styles with different version strings were missing.

- The robots.txt tool can now enable the file and add a Sitemap directive right away.

- Automatic and manual creation of rollback points for changes.

- The import tool can now import templates.

- Saved/imported loader settings now include the custom files you created.

- A progress bar is displayed for all actions that may take longer than the timeout.

- A tool to add a viewport meta tag to all pages of a site.

- Tools for removing broken links and images can now take files on the server into account.

- A new tool to fix incorrectly URL-encoded links in HTML code. Rarely needed, but may come in handy.

- Improved Missing URLs tool: together with the new loader, it now counts requests to non-existent URLs.

- Regex tips in Search & Replace.

- Improved checking for missing PHP extensions.

- Updated all JS tools used to their latest versions.

Plus many other cosmetic improvements and speed optimizations.
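To show what removing version strings means in practice, here is a Python regex sketch for the "?ver=1.2.3" case mentioned in the update. It is not the CMS tool's code: it only handles `ver` as the sole query parameter on `.css` and `.js` URLs, so that pages referencing different versions of the same stylesheet or script all point to one URL. The names `VERSION_RE` and `strip_asset_versions` are hypothetical.

```python
import re

# ".css" or ".js" followed by ?ver=... when ver is the only query parameter.
VERSION_RE = re.compile(r"""(\.(?:css|js))\?ver=[^"'\s>&]*(?=["'\s>])""", re.IGNORECASE)


def strip_asset_versions(html_text: str) -> str:
    # Keep the extension (\1) and drop the ?ver=... suffix.
    return VERSION_RE.sub(r"\1", html_text)
```

URLs with extra parameters, such as `style.css?ver=1&x=2`, are deliberately left unchanged, because removing only part of the query string could break them.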
